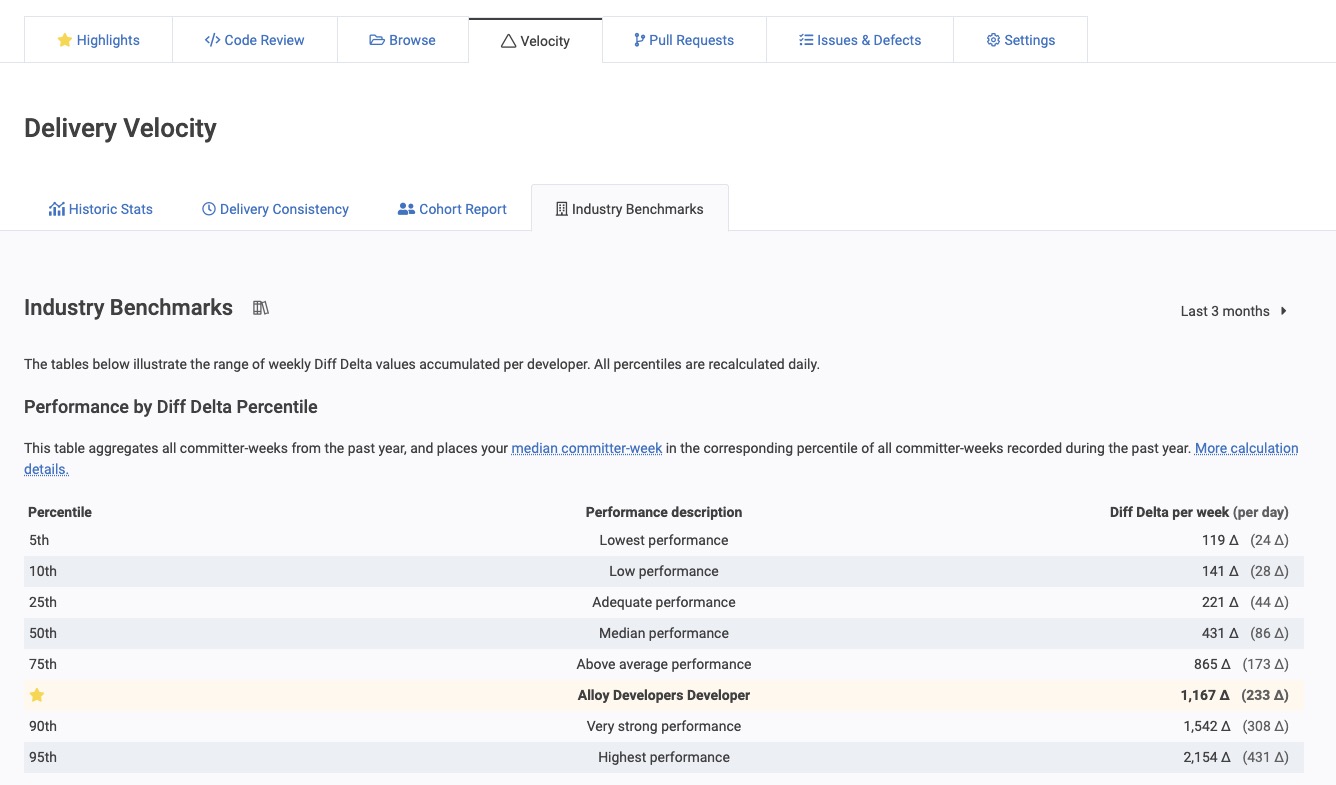

GitClear "Industry Benchmarks," available under the "Velocity" tab when logged in, lets you compare your own velocity against that of companies of different sizes. Here's where you can find it within the GitClear UI (Elite subscription required for access):

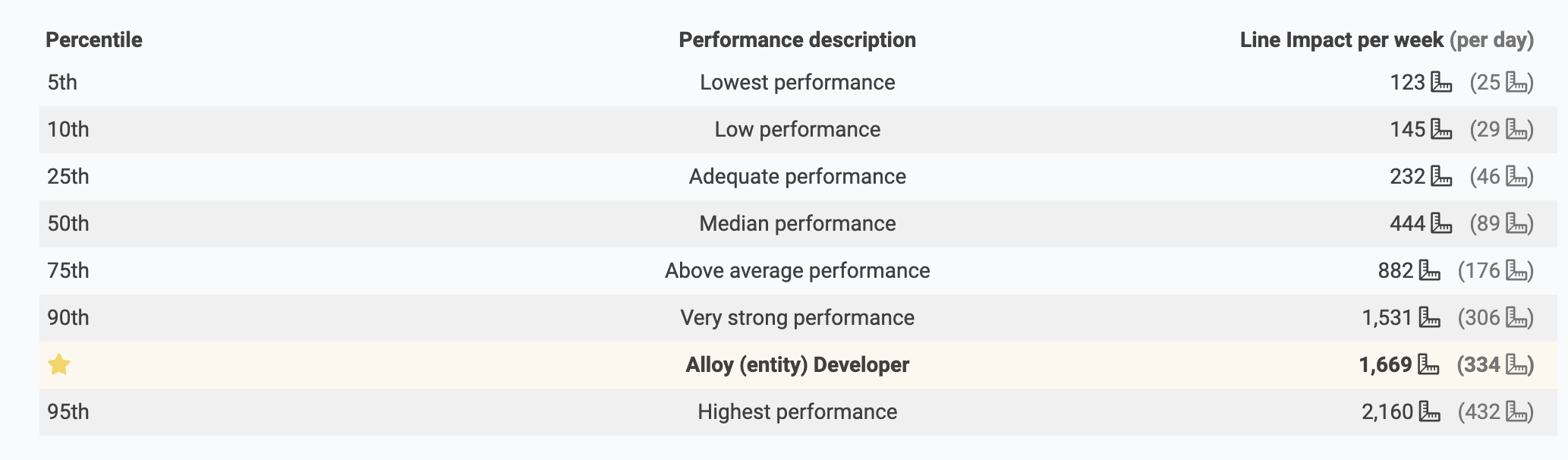

Performance by Diff Delta Percentile

Visualization of site-wide Diff Delta percentiles vs those for the Alloy entity

Both of the tables work by looking at percentiles of "committer-weeks." A committer-week is what it sounds like: one week of an active committer's work, i.e., their Diff Delta. The committer-week is a convenient unit to deal in because it can automatically ignore holiday/vacation breaks (so long as no activity is recorded for the week), it works on an apples-to-apples basis regardless of team size, and it has velocity baked into the unit. It's also convenient because we have millions of committer-weeks recorded, so it's a large enough sample to work in percentiles.
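As a rough illustration of the unit, here is a minimal Python sketch that groups commits into committer-weeks. The `(committer, date, diff_delta)` tuple layout, the function name, and the sample values are assumptions made for this example, not GitClear's actual data model; grouping by ISO calendar week is likewise an assumption about where a week begins and ends.

```python
from collections import defaultdict
from datetime import date


def committer_weeks(commits):
    """Sum Diff Delta per (committer, ISO year, ISO week).

    `commits` is an iterable of (committer, commit_date, diff_delta) tuples
    (a hypothetical layout). Weeks with no recorded activity never produce
    a key, so holiday and vacation breaks drop out on their own.
    """
    totals = defaultdict(float)
    for committer, day, diff_delta in commits:
        iso = day.isocalendar()  # (ISO year, ISO week number, ISO weekday)
        totals[(committer, iso[0], iso[1])] += diff_delta
    return dict(totals)


# Made-up sample data, for illustration only.
commits = [
    ("ana", date(2024, 1, 2), 120.0),
    ("ana", date(2024, 1, 4), 80.0),
    ("ana", date(2024, 1, 23), 300.0),  # no activity in the two weeks before
    ("ben", date(2024, 1, 3), 50.0),
]
weeks = committer_weeks(commits)
```

Because an idle week simply never appears in the result, it cannot drag down a committer's median, which is the property described above.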

Here's what Wikipedia has to say about percentiles, if your statistics are rusty. The rest of this post assumes that you understand the difference between "median" and "average," and that you know what it means to be in the Xth percentile.

This first table puts the selected resource's median committer-week in the context of various percentiles of customer committer-weeks. We use your median committer-week since that is the most representative measure of how much work could be expected per developer, per week, over the time interval selected.
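To make the comparison concrete, the sketch below takes a team's median committer-week and finds where it falls within a benchmark population of committer-weeks. All numbers are invented for the example, and `percentile_rank` is a hypothetical helper using one common definition (the share of values at or below the given value); the table itself may be computed differently.

```python
from bisect import bisect_right
from statistics import median


def percentile_rank(value, population):
    """Return the percentage of `population` values that are <= `value`."""
    ordered = sorted(population)
    return 100.0 * bisect_right(ordered, value) / len(ordered)


# Invented Diff Delta totals, one entry per committer-week.
team_weeks = [150.0, 420.0, 380.0, 610.0, 90.0]
benchmark_weeks = [50.0, 120.0, 200.0, 310.0, 400.0,
                   450.0, 520.0, 700.0, 900.0, 1500.0]

team_median = median(team_weeks)                       # middle value: 380.0
rank = percentile_rank(team_median, benchmark_weeks)   # 4 of 10 values <= 380
print(f"Median committer-week {team_median} sits at percentile {rank:.0f}")
```

The median is used rather than the mean so that a single unusually large week (for example, a bulk import) does not shift the team's position.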

If your company is slotted below "Median Performance" when viewing these stats, do not despair. The most frequent reason that customers appear to underperform is that we're only processing a subset of the repos in which their committers are working. If we're only measuring a fraction of your total output, that will look like underperformance until the full set of repos is being processed. If all of your repos are being processed and you're still seeing a median committer-week below 400 Diff Delta, that can be a usable signal that you may want to evaluate your policies.

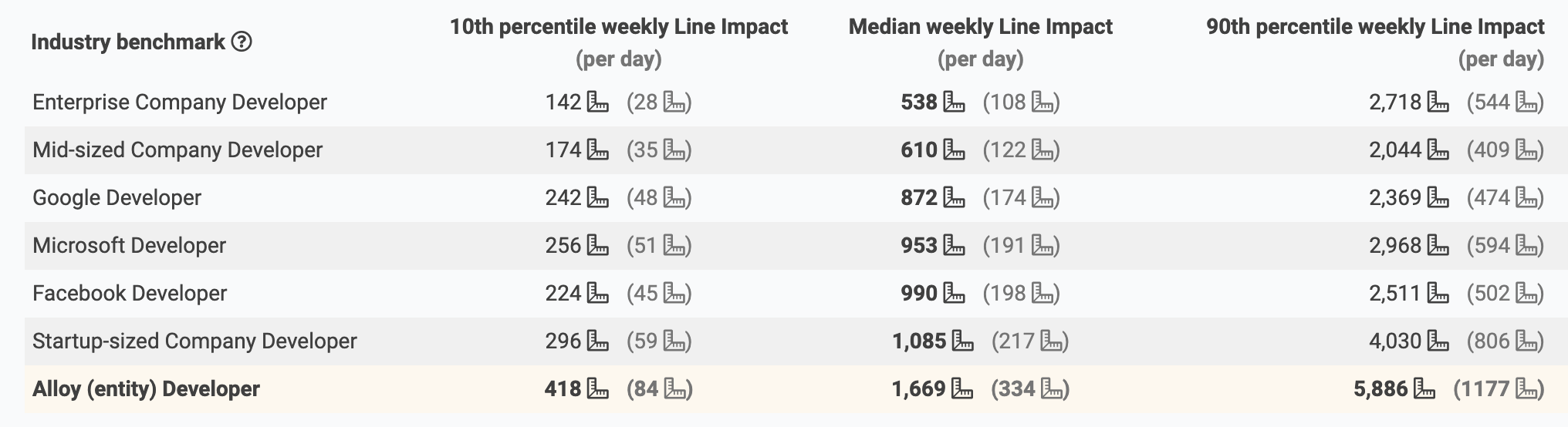

Benchmark Diff Delta Percentiles

How the median committer-week of an Alloy Developer compares to other benchmarks

The second table we've debuted puts your team's performance in the context of assorted benchmarks that we've calculated or approximated.

For the "Enterprise" (500+ employees), "Mid-sized" (50-499 employees), and "Startup-sized" (fewer than 50 employees) segments, we drew on ~1m committer-weeks from the history of GitClear customers who opted into anonymized data sharing. We classified each committer-week by the size of the company, as deduced from entity settings. The result is a ballpark range of weekly performance at various stages of growth. As is apparent from this table, code evolves more gradually in the enterprise world than the startup world. This likely reflects the added complexity of coordination, and the general lack of system-wide additions or subtractions, which are somewhat commonplace at startups but rare in enterprise software.
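The segmentation can be sketched in a few lines of Python. The employee-count thresholds come from the segment definitions above; everything else (the `(employee_count, diff_delta)` record layout, the function names, and the sample numbers) is hypothetical and only meant to show the shape of the calculation.

```python
from collections import defaultdict
from statistics import median


def size_segment(employee_count):
    """Map a company's employee count to its benchmark segment."""
    if employee_count >= 500:
        return "Enterprise"
    if employee_count >= 50:
        return "Mid-sized"
    return "Startup-sized"


def median_by_segment(records):
    """Median committer-week per segment.

    `records` is an iterable of (employee_count, diff_delta) pairs,
    one pair per committer-week (an assumed layout).
    """
    buckets = defaultdict(list)
    for employees, diff_delta in records:
        buckets[size_segment(employees)].append(diff_delta)
    return {segment: median(values) for segment, values in buckets.items()}


# Invented sample records, for illustration only.
records = [
    (12, 900.0), (30, 700.0), (45, 1100.0),
    (120, 500.0), (300, 650.0),
    (800, 300.0), (5000, 420.0), (2000, 360.0),
]
medians = median_by_segment(records)
```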

For the specific company comparables, we leveraged data from Open Repos, matching committers on well-known products with the company that was sponsoring that product's development. We then manually confirmed that each committer identified had some documented association with the company in question. If it's not already apparent: these stats should be considered a ballpark approximation, much less precise than other calculations we make. Still, even a rough approximation of how development works within well-known companies can shed light on how some brands build enduring success. In the future, we hope to analyze how addition/deletion/update and other operations vary between companies, and how much legacy refactoring to expect relative to code base size.

Let us know if it's useful!

These charts are already online and available to all Elite-level customers. If you find any quirks, or have ideas on how we can make the language clearer, we'd love to hear them, as always.