The "Collaboration Stats" page, part of the Pull Request stats that GitClear offers, can tell you a lot about how a team is operating. The page is split in two: the top half measures how PR creation is trending over time, and the bottom half measures how PR review is changing.

Pull Request Collaboration Review Stats

The bottom half of the "Pull Requests" => "Collaboration Stats" page holds the review stats for your team:

How goes team communication at a meta-level?

What can be learned from each of these?

Average delay until first comment. Measured in business hours (i.e., excluding nights and weekends), how much time elapsed between when a pull request was opened and when it received its first non-bot comment (see how to exile your bots as contributors)? The lower, the better. Under 8 hours (one business day) is ideal.

Total pull requests reviewed. Usually an analog to "Total pull requests posted," unless your team has a high volume of unreviewed PRs. More is usually considered better.

Average comments left per PR. An analog to "Average comments received per PR" (but useful to have separately when viewing data at an individual level). Which direction you want this to move in will depend on your team's circumstances.

Total comments left. See previous.

Average time commenting per PR. How long does the average reviewer in the selected team (or individual) spend reviewing a pull request? This calculation is made as follows: for comments that were left without a break of an hour or more between them, how much time elapsed between the initial comment and the final comment? For the initial comment, based on its complexity, how many minutes might that have taken? We sum these values together, and the value shown here is per reviewer over the course of all the rounds of the pull request. The ideal pull request "time spent commenting per reviewer" is purely relative to a team's circumstances. It will generally fall somewhere between 10-90 minutes.
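The "Average time commenting per PR" calculation described above can be sketched roughly as follows. This is a minimal illustration and not GitClear's actual implementation: the one-hour session gap comes from the description, but the function names, the input shape, and especially the word-count heuristic for the initial comment are assumptions made for the example. It also assumes that the "initial comment" estimate applies to the first comment of each uninterrupted commenting session.

```python
from datetime import datetime, timedelta

# From the description: a break of an hour or more ends a commenting session.
SESSION_GAP = timedelta(hours=1)


def estimate_first_comment_minutes(body: str) -> float:
    """Hypothetical complexity heuristic: 1 minute base plus 1 minute per 40 words."""
    return 1.0 + len(body.split()) / 40.0


def minutes_commenting(comments: list[tuple[datetime, str]]) -> float:
    """Estimate the minutes one reviewer spent commenting on one pull request.

    `comments` holds (timestamp, body) pairs left by a single reviewer,
    across all rounds of the pull request.
    """
    if not comments:
        return 0.0
    ordered = sorted(comments, key=lambda c: c[0])
    total = 0.0
    session_start, session_first_body = ordered[0]
    previous = session_start
    for stamp, body in ordered[1:]:
        if stamp - previous >= SESSION_GAP:
            # Close the current session: elapsed time plus the estimate
            # for the comment that opened it.
            total += (previous - session_start).total_seconds() / 60
            total += estimate_first_comment_minutes(session_first_body)
            session_start, session_first_body = stamp, body
        previous = stamp
    # Close the final session.
    total += (previous - session_start).total_seconds() / 60
    total += estimate_first_comment_minutes(session_first_body)
    return total
```

Averaging `minutes_commenting` over every (reviewer, pull request) pair in the selected range would give a per-reviewer figure comparable to the one shown on the page.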

Total pull requests approved. Number of reviewers who have approved a pull request for merge in the selected time range. More is usually considered better.

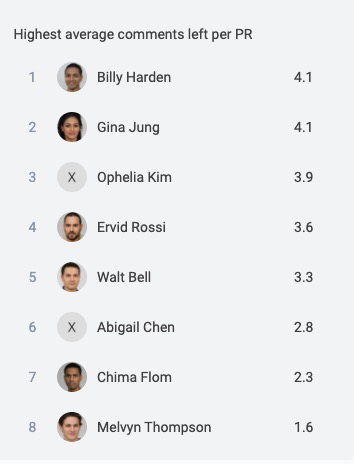

Highest average comments left per PR

How engaged were our (programmatically generated) commenters on each PR?

How many comments did each contributor leave per pull request in which they contributed, over the time range and resource selected?

Ideally, a manager likes to see their veteran team members especially active in leaving comments, since that suggests that knowledge about project conventions and libraries is being effectively disseminated throughout the dev team.
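As a rough illustration of how a "comments left per PR" ranking like this could be derived, the sketch below averages each contributor's comments over only the pull requests they commented on. The input shape (one `(commenter, pr_number)` pair per comment) and the function name are assumptions for the example, not GitClear's data model.

```python
from collections import defaultdict


def average_comments_left(comments: list[tuple[str, int]]) -> list[tuple[str, float]]:
    """Rank commenters by average comments per PR they commented on.

    `comments` holds one (commenter, pr_number) pair per comment left.
    Returns (commenter, average) pairs, highest average first.
    """
    per_pr_counts: dict[str, dict[int, int]] = defaultdict(lambda: defaultdict(int))
    for commenter, pr_number in comments:
        per_pr_counts[commenter][pr_number] += 1
    averages = [
        (commenter, sum(counts.values()) / len(counts))
        for commenter, counts in per_pr_counts.items()
    ]
    # Sort by average descending, then by name for a stable order on ties.
    return sorted(averages, key=lambda pair: (-pair[1], pair[0]))
```

Because the denominator is the number of PRs a contributor actually commented on, someone who leaves many comments on a few PRs ranks above someone who leaves one comment on many.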

Posting Stat Trends

How have salient pull request stats been changing during the chosen time frame?

The top half of the page helps contextualize the health of the developers who submit pull requests within the team and resource that you have selected. What can be learned from each stat?

Average pull request open time. How many business hours (i.e., hours excluding weekends and nights) elapsed, on average, between when a pull request was opened and when it was merged, for pull requests closed during the selected time interval?
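To make the "business hours" idea behind "Average pull request open time" concrete, here is a minimal sketch. It assumes a Monday-to-Friday workday of 09:00 to 17:00 and naive datetimes in a single time zone; the exact hours GitClear treats as business hours are not stated here, so `WORKDAY_START`, `WORKDAY_END`, and both function names are hypothetical.

```python
from datetime import datetime, time, timedelta

WORKDAY_START = time(9, 0)   # assumption: workday opens at 09:00
WORKDAY_END = time(17, 0)    # assumption: workday closes at 17:00


def business_hours_between(start: datetime, end: datetime) -> float:
    """Hours between `start` and `end` that fall on Mon-Fri inside the workday."""
    if end <= start:
        return 0.0
    total = timedelta()
    day = start.date()
    while day <= end.date():
        if day.weekday() < 5:  # Monday is 0, Friday is 4
            window_open = datetime.combine(day, WORKDAY_START)
            window_close = datetime.combine(day, WORKDAY_END)
            overlap_start = max(start, window_open)
            overlap_end = min(end, window_close)
            if overlap_end > overlap_start:
                total += overlap_end - overlap_start
        day += timedelta(days=1)
    return total.total_seconds() / 3600


def average_open_time(prs: list[tuple[datetime, datetime]]) -> float:
    """Average business hours between (opened_at, merged_at) pairs."""
    if not prs:
        return 0.0
    return sum(business_hours_between(o, m) for o, m in prs) / len(prs)
```

Under these assumptions, a pull request opened at 15:00 on a Friday and merged at 11:00 the following Monday counts as 4 business hours open, not 68 wall-clock hours.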

Total pull requests posted. How many pull requests were opened during this period? GitClear does not harbor an opinion on whether a team should aspire to post more pull requests or fewer, but it is most commonly thought that most work should travel through PRs.

Average comments received per active PR. It's generally thought that receiving fewer comments is better than more comments, since it can indicate that the submitting committer and the reviewers came to the same conclusion about the optimal implementation. However, in practice, it's just as often true that a low "comments per PR" count indicates that reviewers are not being incentivized to submit substantive concerns that they may harbor. Which direction you want this to move in will depend on your team's circumstances.

PRs merged without review. Most managers prefer for this number to be as close to zero as possible, since it suggests that code may have been merged that skirts the conventional review process of the team.

Average rounds of revisions. How many commits were made per merged pull request after the PR was submitted for review? That is, how much work did the committer undertake in response to their teammates' feedback? Like "Average comments received," a lower value of this stat can mean that the PR was a dandy in need of negligible refactoring to be launched to prod, OR that the committer received no feedback, OR that the committer did receive feedback but ignored it. It takes an engaged manager to decipher which of these implications best matches the observed circumstances. On balance, a lower number tends to indicate better-prepared PRs being submitted.
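The "Average rounds of revisions" definition above boils down to counting commits that land after a PR is submitted for review, then averaging over merged PRs. A minimal sketch follows; the dictionary keys (`submitted_at`, `merged`, `commit_times`) and the function name are hypothetical and chosen only for illustration.

```python
from datetime import datetime


def average_revision_commits(prs: list[dict]) -> float:
    """Average number of commits made after review submission, over merged PRs.

    Each PR dict uses hypothetical keys:
      submitted_at: datetime the PR was submitted for review
      merged:       True if the PR was merged
      commit_times: list of commit datetimes on the PR
    """
    merged = [pr for pr in prs if pr["merged"]]
    if not merged:
        return 0.0
    later_commits = [
        sum(1 for stamp in pr["commit_times"] if stamp > pr["submitted_at"])
        for pr in merged
    ]
    return sum(later_commits) / len(merged)
```

A count like this cannot distinguish a clean PR from one whose feedback was ignored, which is why the number still needs a manager's interpretation.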

Pull request posting frequency. The sibling of "Total pull requests posted," which you can read about above.

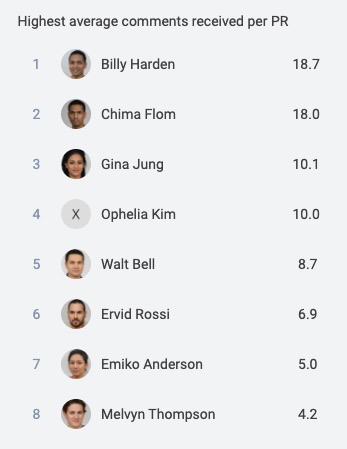

Highest comments received per PR

On the right side of the "Pull Request Creation Stats" section is a module built to indicate how much conversation is being directed toward various team members:

Members of the team who have received the most comments per PR posted for review. All of these names and avatars were programmatically generated.

The general expectation is that, the newer a team member is to the team, the higher they will fall on this list. The committers who receive the highest average comments per PR are those who submit work that elicits a chorus of suggestions from their teammates.

For Senior Developers, it can be a signal of objective misalignment when they end up in the upper reaches of the board. Whereas a new developer is expected to receive comments as they get up to speed on conventions, a mature developer should generally be able to anticipate what sort of feedback they would receive before submitting their pull request. If they continue to receive high comment counts, it could suggest that they have failed to heed past admonitions, or that they tend to submit work that prompts others to stop coding and spend time discussing the PR content.

If you hire a new developer who does not end up high on this list, consider adopting practices that ensure new team members get a friendly, timely welcome when they submit their initial pull requests.