
When we explain GitClear's code progress metric, Diff Delta, we've grown to expect some head tilting and furrowed eyebrows at first. "If it were possible to create a metric that estimated real progress within a repo, don't you think somebody would have noticed and done that by now?" we can see them think. Their reservations are fair. Great technical minds excel at questioning big promises & seeing through claims that lack data. At the same time, many engineers & managers recognize that, if such a metric existed, it could offer a potent data point among those that guide long-term decision-making.

The goal of this article is to aim for the heart of what smart decision-makers want to know when deciding how to wield Diff Delta:

What does it mean to tally a lot of Diff Delta?

What are the practical, real-world implications of seeing fluctuations in this metric?

This article takes an empirical approach to answering these questions by offering a list of example cases. By the end of the next five minutes, you'll see how products qualitatively transform as their Diff Delta reaches 10k, 100k and 1m units.

🏫 Diff Delta 101

The least you need to know about Diff Delta is that it's a metric GitClear has been evolving since 2016 to quantify how much meaningful change occurs in a repo. This 3-minute video includes a high-level description of how the calculation evolves in response to a stream of commits. For a deeper treatment, see Diff Delta: Derived from First Principles.

In a nutshell, the metric has been calibrated such that Diff Delta ≈ Cognitive Energy ≈ Change Difficulty ≈ Time Spent. How can we estimate the "cognitive energy" implied by a diff? We observe that the energy required to change 50 lines of code varies widely based on the particulars of the change:

Deleting 50 lines that have been in the repo for 3 years is harder than

Deleting 50 lines added a month ago is harder than

Adding 50 lines of new Python code is harder than

Adding 50 lines of new CSS is harder than

Moving 50 lines of code between files

Thus, the Diff Delta of deleting old code is much higher per line than the Diff Delta of adding greenfield code. Meanwhile, changes that are simply noise (autogenerated code, or lines committed and quickly removed) can make up a large share of all changed lines (up to 95%, according to our data).
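To make the ordering above concrete, here is a minimal sketch of how it could be encoded as per-line weights. The change-type names and weights in `HYPOTHETICAL_WEIGHTS` are invented for illustration and only reproduce the hardest-to-easiest ranking; they are not GitClear's actual coefficients.

```python
# Hypothetical per-line weights, invented for illustration only. They preserve
# the hardest-to-easiest ranking described in the text, but they are NOT
# GitClear's real Diff Delta coefficients.
HYPOTHETICAL_WEIGHTS = {
    "delete_3_year_old_lines": 1.0,
    "delete_1_month_old_lines": 0.6,
    "add_python_lines": 0.4,
    "add_css_lines": 0.2,
    "move_lines_between_files": 0.05,
}


def toy_energy(change_type: str, lines: int) -> float:
    """Toy 'cognitive energy' score: hypothetical weight times line count."""
    return HYPOTHETICAL_WEIGHTS[change_type] * lines


def rank_by_difficulty(lines: int = 50) -> list[str]:
    """Order change types from hardest to easiest for the same number of lines."""
    return sorted(
        HYPOTHETICAL_WEIGHTS,
        key=lambda change_type: toy_energy(change_type, lines),
        reverse=True,
    )


if __name__ == "__main__":
    for change_type in rank_by_difficulty():
        print(f"{change_type:<26} {toy_energy(change_type, 50):5.1f}")
```

Multiplying a weight by a line count is the simplest possible model: it captures the relative ordering of the five 50-line changes, not the magnitudes the real metric assigns.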

link📈 Diff Delta Benchmarks: Thresholds of Progress

Enough words; let's look at a numeric roundup of how Diff Delta accumulates over orders of magnitude. You can find live-updated data on our Diff Delta Factors page.

Per Day, by Experience Level/Team

| Experience Level/Team Size | DD/Day | DD/Year | Description |
|---|---|---|---|
| All projects | 29 | | Across all types of projects (startups, mid-sized, and enterprise companies) taken together. Corresponds to 5-10 meaningful lines of code changed per day. |
| Mid-sized company | 94 | | To date, mid-sized companies (having 10-50 developers) are the slowest-moving companies we measure. |
| Enterprise | 73 | | Microsoft, Google and Facebook comprise most of our measurements of this demographic. One representative day from a (Google) TensorFlow developer included 10 added lines, 20 deleted lines, 5 updated lines, 5 copy/pasted lines, and 10 find/replaced lines across a mix of file types. |
| Startup | 110 | | One of our biggest data sources, since startups are more eager than bigger companies to get an edge from measurement. A representative day includes 350 lines added, 200 lines deleted, 10 lines updated, and 300 lines moved. The line counts are much higher relative to DD here because of high churn (startups can burn through code fast). |
| Facebook React team | 1,000 | | Facebook's React team is staffed by illustrious developers; their rate of evolution approximates a startup's despite having to architect infrastructure that is heavily tested & widely distributed (usually factors that slow progress). |
| | 3,700 | | One of the most active & successful projects (as measured by widespread user adoption) in the world. |
| Chromium | 23,300 | | Chromium is the upper end of what we have observed to be possible for a large project/team. This is the single fastest open source project that we measure. |
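The per-day rates can be turned into a rough timeline for reaching a cumulative Diff Delta target. This is a sketch under two simplifying assumptions: a hypothetical 250 working days per year, and every developer sustaining the same daily rate. With the startup rate of 110 DD per developer per day and a hypothetical five-person team, reaching 300k Diff Delta takes a little over two years.

```python
import math

WORKING_DAYS_PER_YEAR = 250  # assumption: roughly 50 weeks x 5 days


def days_to_reach(target_dd: float, dd_per_dev_day: float, developers: int) -> int:
    """Working days needed for a team to accumulate `target_dd` Diff Delta,
    assuming every developer sustains the same daily rate."""
    if dd_per_dev_day <= 0 or developers <= 0:
        raise ValueError("rate and team size must be positive")
    return math.ceil(target_dd / (dd_per_dev_day * developers))


def years_to_reach(target_dd: float, dd_per_dev_day: float, developers: int) -> float:
    """The same estimate expressed in years of working days."""
    return days_to_reach(target_dd, dd_per_dev_day, developers) / WORKING_DAYS_PER_YEAR


if __name__ == "__main__":
    # Startup rate from the table (110 DD/day), hypothetical 5-person team.
    print(days_to_reach(300_000, 110, 5))             # 546
    print(round(years_to_reach(300_000, 110, 5), 2))  # 2.18
```

Real teams fluctuate from week to week, so treat the output as an order-of-magnitude check rather than a schedule.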

Cumulative, by Product

| Product/Company | Diff Delta | Commit Count | Time Range | Description/Notes |
|---|---|---|---|---|
| NoteApps.info | 27,000 | 550 | 2020-2022 | The site is live, so you can browse what 27k Diff Delta gets you. It has about 5 different types of pages. What can't be seen are another 5-10 admin pages and all of the backend code that allows uploading images and such. 5-10k DD is generally the minimum required to yield a simple but robust Ruby on Rails desktop website. |
| Huginn | 29,000 | 3,490 | 2013-2023 | Extremely popular (38k GitHub stars) system for creating automated agents that run tasks upon receiving a signal. A Rails app where the lion's share of the Diff Delta has been spent building |
| Shotcut | 162,000 | 5,461 | 2016-2023 | Free, open-source, cross-platform video editor written in C++ and QML. Since GitClear only added QML support in January 2023, the actual Diff Delta for this product may be more like 300-500k once all of the QML has been processed. |
| Logseq | 200,000 | 10,727 | 2019-2023 | Popular open source note-taking app. The app itself had recorded 141k Diff Delta as of February 2023, but is credited in this table with 200k, based on the average user's use of at least 2-3 plugins to achieve their desired functionality. Each Logseq plugin is likely to be 10-30k Diff Delta, so 200k is a usable estimate of the volume of code change that the project has experienced to date. |
| GitLens | 282,000 | 4,874 | 2018-2022 | Billed as "Git supercharged," it is one of the most popular VS Code plugins ever released, with 20m installs and counting as of GitLens Release 13, circa January 2023. It's rare to see a plugin for another application eclipse 500k Diff Delta. This plugin has undergone more development than any other we have reviewed recently. |
| Akaunting | 381,200 | 7,242 | 2017-2023 | Well-designed accounting app written in PHP over 6 years, with 6k GitHub stars. Its home page advertises "cash flow," "easy invoicing," "transaction categories" and "expense tracking." The biggest existing areas of Diff Delta have been JavaScript (30k DD), views/HTML (40k), and PHP app infrastructure (65k). The project has done a good job of removing code: about 30% of its DD is code that has been deleted over the years. |
| Bonanza.com | 570,000 | 8,240 | 2007-2010 | This was our first Rails startup, which grew from nothing to 10,000 sellers and around 10m items for sale, and raised a $1m funding round (in May 2010). View the site as it existed after its first 500k Diff Delta via the Wayback Machine. Not shown: an eBay importer that cost about 50k Diff Delta on its own. A highly interactive listing form required another 100k Diff Delta. |
| Standard Notes | 650,000 | 15,001 | 2018-2022 | A well-respected, security-driven, cross-platform note-taking app. Since 2020, their DD velocity has surged, reaching a high of 290k in 2022 alone. The product you see as of January 2023 is what's possible with 650k cumulative DD. |
| GitClear | 1,100,000 | 29,949 | 2017-2022 | GitClear has evolved at a steady rate of about 150k-250k DD per year since we launched to customers in 2019. This has allowed us to build a Highlights page, Code Review system, Pull Request stats, Industry Benchmarks, and the rest of the reports listed in the GitClear documentation. We have about 20 reports in all thus far. |
| Amplenote | 1,200,000 | 37,543 | 2017-2022 | Amplenote is a multi-platform (web, Android, iOS, with a desktop version forthcoming) app that allows for note taking, task lists, and calendar integration (including a fully synchronized schedule with Google or Outlook calendar). Its feature set compares favorably to the best note-taking apps on the market. |
| Ruby on Rails | 2,300,000 | 71,000 | 2004-2019 | One of the most widely used web frameworks. Had accumulated 2.3m DD as of Rails version 6. Its rate of evolution has ebbed somewhat since the mid-2010s. |
| Microsoft VS Code | 6,000,000 (est.) | 104,951 | 2016-2022 | Microsoft VS Code has consistently averaged 900k-1.1m DD per year throughout the 5 years we have tracked it. Since it started in 2016, this adds up to about 6m cumulative Diff Delta to build arguably the most popular IDE of the 2020s. |
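To line this table up with the 10k, 100k and 1m thresholds mentioned at the start of the article, here is a small sketch that buckets each product by order of magnitude. The figures are copied from the table (VS Code's is an estimate); the tiers are simply powers of ten, an assumption for illustration rather than an official GitClear classification.

```python
import math

# Cumulative Diff Delta figures copied from the table above.
PRODUCTS = {
    "NoteApps.info": 27_000,
    "Huginn": 29_000,
    "Shotcut": 162_000,
    "Logseq": 200_000,
    "GitLens": 282_000,
    "Akaunting": 381_200,
    "Bonanza.com": 570_000,
    "Standard Notes": 650_000,
    "GitClear": 1_100_000,
    "Amplenote": 1_200_000,
    "Ruby on Rails": 2_300_000,
    "Microsoft VS Code": 6_000_000,  # estimate, per the table
}


def magnitude_tier(diff_delta: float) -> int:
    """Return the power-of-ten floor of a Diff Delta value (e.g. 27k -> 10_000)."""
    if diff_delta <= 0:
        raise ValueError("Diff Delta must be positive")
    return 10 ** math.floor(math.log10(diff_delta))


def group_by_tier(products: dict[str, float]) -> dict[int, list[str]]:
    """Group product names by tier, keeping the table's order within each tier."""
    groups: dict[int, list[str]] = {}
    for name, dd in products.items():
        groups.setdefault(magnitude_tier(dd), []).append(name)
    return dict(sorted(groups.items()))


if __name__ == "__main__":
    for tier, names in group_by_tier(PRODUCTS).items():
        print(f"{tier:>9,} DD tier: {', '.join(names)}")
```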

🧠 Techniques for Estimating Expected Progress

The data holds substantive lessons for both teams and individuals who want to direct their energy toward the greatest ROI.

1. Small teams move faster (about 50% faster per developer than the average enterprise team)

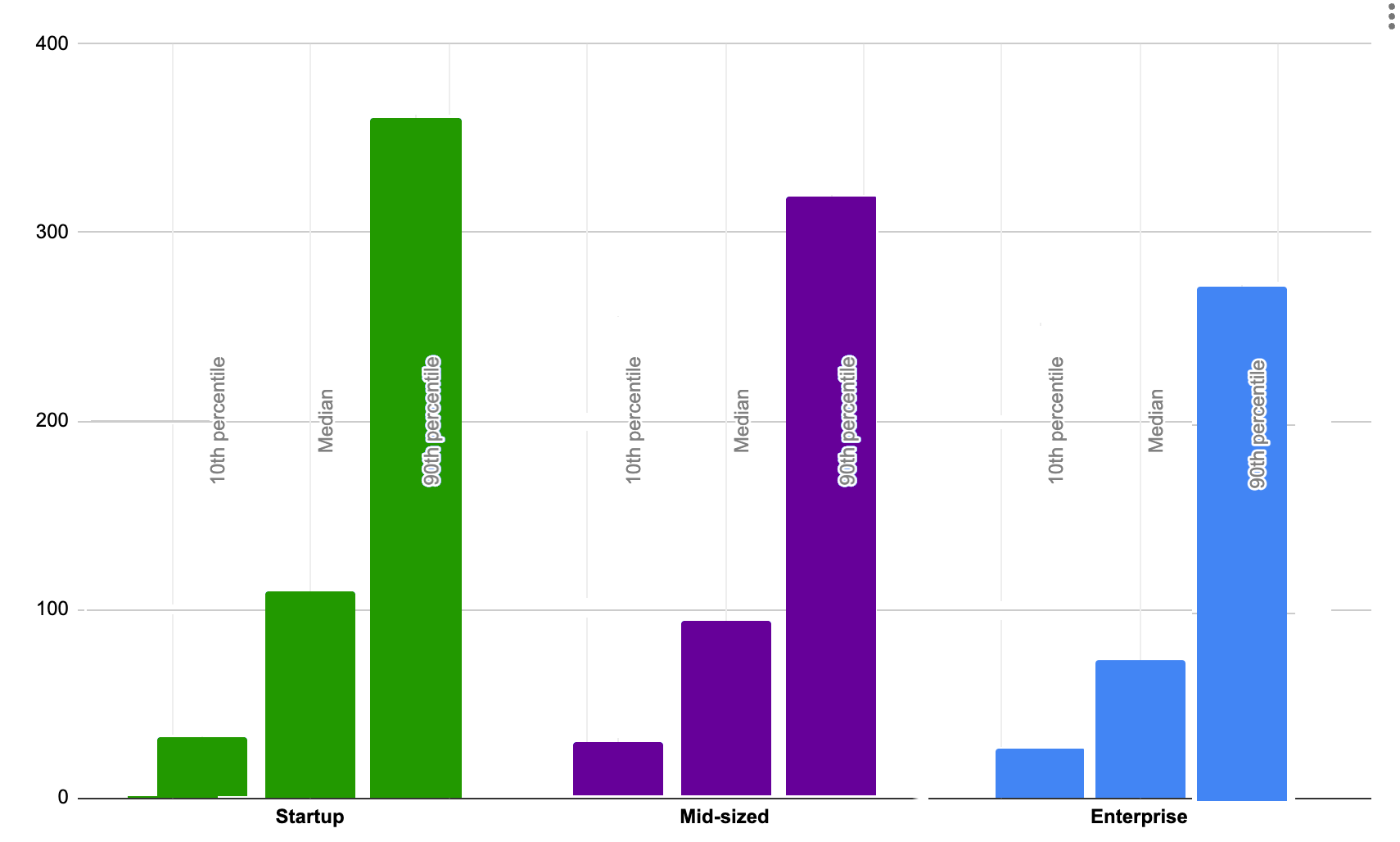

The range of observed daily Diff Delta for 10th- to 90th-percentile developers at companies of different sizes

All our data is unambiguous on this point: the smaller the team, the greater the cumulative code change we observe per developer.

This makes sense, given how many extra non-development tasks are required at an Enterprise-sized (100+ developer) company: estimating sprints, assigning story points, pair programming, reviewing PRs, coordinating concurrent work on a system, training new hires, tracking down bugs and tech debt introduced by junior devs, building consensus on how to evolve the infrastructure, and so on. In so many ways, coordination costs conspire against large development teams.

The good news is that developers working in an Enterprise context can boost their rate of code change by operating more like a Startup. By observing the circumstances behind more-productive and less-productive weeks, an attentive PM at a company of any size can build a team that operates at the speed of a Startup. Microsoft is one tantalizing proof point of what is possible: as of February 2023, their VS Code project averages a higher Diff Delta per developer than a startup. Microsoft has done a commendable job of identifying the recipe that allows developers to operate autonomously and manifest enthusiasm for the project.

Well-constructed Enterprise companies like Amazon and Microsoft have learned that they can maximize throughput by drawing boundaries between teams that minimize cross-team coordination. When executed successfully, this method strips away many of the liabilities that would otherwise bog down developers at Enterprise-sized companies.
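As a quick check of this section's "about 50% faster" figure, the per-day table lists 110 DD per day for startup developers and 73 for enterprise developers. Treating both as per-developer daily rates (our reading of the table), the relative speedup works out as follows; `relative_speedup` is a hypothetical helper name.

```python
STARTUP_DD_PER_DEV_DAY = 110    # startup figure from the per-day table
ENTERPRISE_DD_PER_DEV_DAY = 73  # enterprise figure from the per-day table


def relative_speedup(fast_rate: float, slow_rate: float) -> float:
    """Fractional speedup of `fast_rate` over `slow_rate` (0.5 means 50% faster)."""
    return fast_rate / slow_rate - 1


if __name__ == "__main__":
    speedup = relative_speedup(STARTUP_DD_PER_DEV_DAY, ENTERPRISE_DD_PER_DEV_DAY)
    print(f"Startups are {speedup:.0%} faster per developer")  # Startups are 51% faster per developer
```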

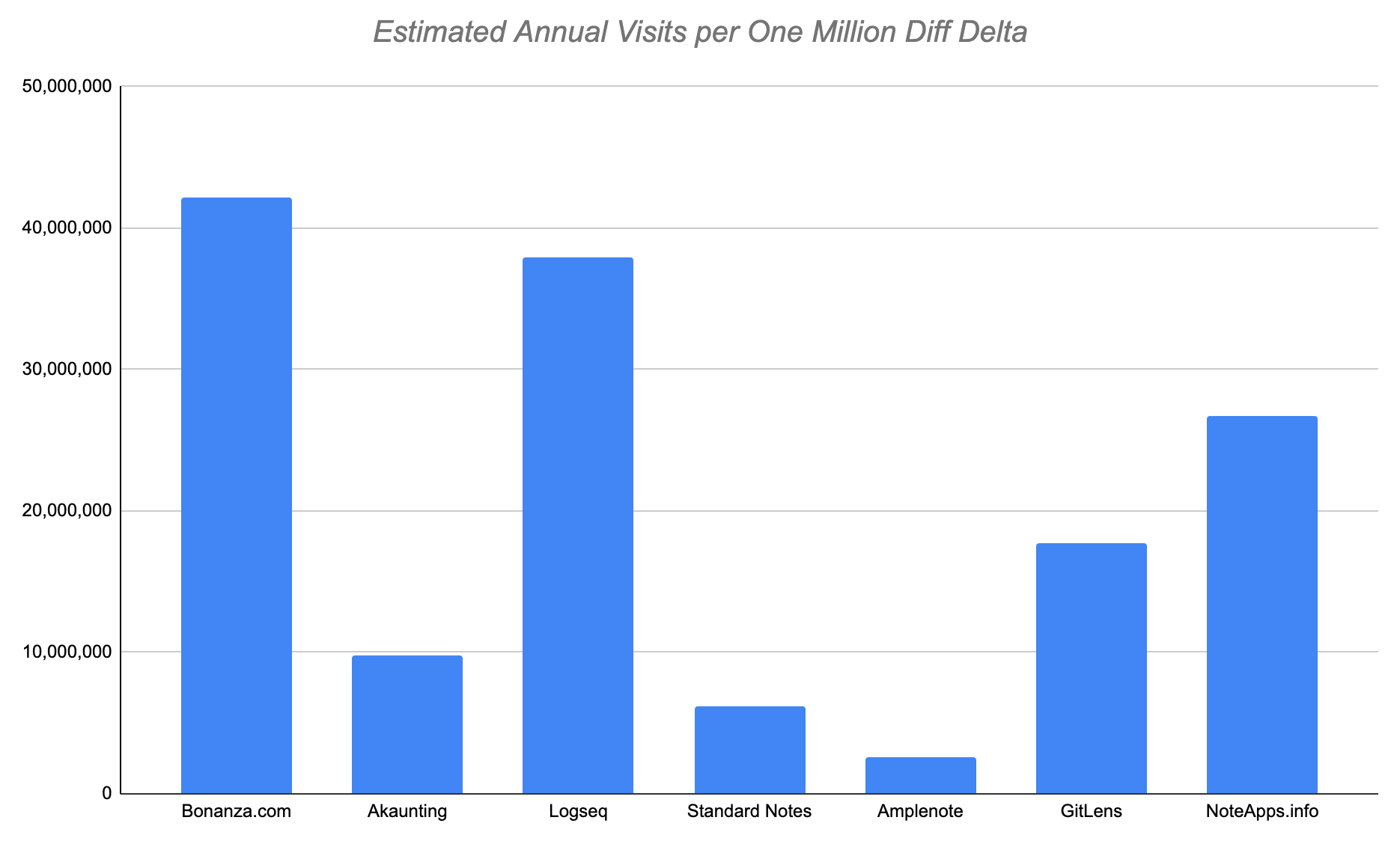

2. One million Diff Delta empirically corresponds to ~20 million user visits per year

But in practice, most products never reach one million Diff Delta. So it's more useful for the average Project Manager to know that "300,000 Diff Delta should generate at least 20k user visits (or downloads) per month." This estimate comes from analyzing the set of seven products (N=7) for which we have years of data measuring Diff Delta and estimated user visits.

In our seven-product sample, extrapolating to one million Diff Delta yields an average of 20m user visits or downloads per year, though this average is skewed by outliers.

The median product should expect to see something closer to 20k visits per month per 300k Diff Delta.

When you invest developer energy in building a product, you should expect to see a proportional increase in user interest. How much of an uptick in user interest should you expect to see, if you are investing your developers' energy wisely? One data-anchored way to approach the question is to compare the ratio of "user visits per 1m Diff Delta" across products.

Our data suggests that if you are building a consumer-oriented product (even for niche consumers like bookkeepers and software developers), you should start to become concerned if you have accumulated 300,000 Diff Delta and you aren't generating at least 20k combined user visits and downloads per month. Fast-growing apps like Bonanza.com and Logseq set an uncomfortably high bar for comparison: Logseq, for example, had an estimated 500k visitors per month within its first 200k Diff Delta. Those heights are reached only if you're fortunate enough to pick an important problem and take an approach that people immediately recognize as fitting their needs. These companies are the exception.

The average growth trajectory our data shows is more like that of Standard Notes or Amplenote. These products set off with lofty ambitions and had already invested 100-200k Diff Delta in infrastructure before they began to reach users who saw their value. Amplenote has been the slowest of the batch to convert its development effort into user visits. It's a product that has required years of investment merely to reach the existing platforms (iOS, Android, web) with the expected features (everything Evernote offered). As of early 2023, the product had accumulated about 1m Diff Delta but had just 200,000 visits per month to show for it.

Since most companies don't publish their Diff Delta or their daily visits, the sample size available to extrapolate from leaves something to be desired as of early 2023. Still, looking at the extents of this data can be instructive for evaluating whether one's development effort is connecting with its audience. This footnote describes the nitty-gritty details of how we collected this data, for the curious.
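The 300k Diff Delta / 20k monthly visits rule of thumb from this section can be expressed as a simple check. This is a minimal sketch: the function names are hypothetical, the example products are made up, and `expected_monthly_visits` assumes visits scale proportionally with Diff Delta, which the text implies but does not quantify beyond the 300k anchor point.

```python
# Rule of thumb from the text: past ~300k cumulative Diff Delta, a consumer-oriented
# product should be seeing at least ~20k combined visits/downloads per month.
CONCERN_DD_THRESHOLD = 300_000
MIN_MONTHLY_VISITS = 20_000


def expected_monthly_visits(cumulative_dd: float) -> float:
    """Median-case expectation, ASSUMING visits scale proportionally with Diff Delta."""
    return cumulative_dd / CONCERN_DD_THRESHOLD * MIN_MONTHLY_VISITS


def visits_look_concerning(cumulative_dd: float, monthly_visits: float) -> bool:
    """True when a product is past the Diff Delta threshold but below the visit floor."""
    return cumulative_dd >= CONCERN_DD_THRESHOLD and monthly_visits < MIN_MONTHLY_VISITS


if __name__ == "__main__":
    # Hypothetical products, not taken from the data set above.
    print(visits_look_concerning(350_000, 12_000))  # True: past 300k DD, under 20k visits
    print(visits_look_concerning(150_000, 5_000))   # False: too early to judge
    print(expected_monthly_visits(600_000))         # 40000.0
```

Because outliers like Logseq sit far above this floor, the check is only useful for flagging products that fall well short of the median case.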

3. One million Diff Delta corresponds to a rough average of $750,000 in revenue per year

Here's a fun question for the bottom-line-minded CEOs and Founders of new companies: how much development should you expect will be necessary before the company starts generating appreciable revenue?

How much annual revenue were companies generating per million Diff Delta authored by their developers?

How accurate are these estimates? As closely guarded as companies are with their visitor data, they're twice as secretive about revenue data. So the graph we're showing relies on a variety of extrapolation tactics, which you can read about in depth here to get a more nuanced sense of the validity of these implications. TL;DR: don't take this too seriously until we have 10 sample data points. That said, the correlation between products' Diff Delta and their revenue is consistent enough that we believe this data can help new users form a loose range of expectations for their product.

Consider the prototypical 300,000 Diff Delta product that an average startup can build in two years' time: by the time it has accumulated 300k Diff Delta, it should expect to be generating $100k-$150k annually. By the time the product has grown to a million Diff Delta, the median annual revenue in this data set grows to $750k.

If you are a Manager or Founder of a project that has accumulated 300k Diff Delta without reaching $10k per month in revenue, you may be under-investing in your marketing efforts. Another possibility is that you are entering an industry with many established products, where the barrier to securing the company's first few customers is higher than the norm. This is more common when selling products to large Enterprise companies, or to individuals at a high price point. These buyers tend to require a great deal of evidence that the product under consideration is not just useful, but also reliable and widely used. Quantitatively speaking, such a company might need 500k Diff Delta before it starts to close Enterprise contracts.
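The revenue rule of thumb above can be sketched the same way. The $10k/month floor and the 300k and 500k Diff Delta thresholds come from the text; reusing the same $10k/month floor at the 500k Enterprise threshold is our assumption, and the function names and example product are hypothetical.

```python
MONTHLY_REVENUE_FLOOR = 10_000     # USD per month, from the text
TYPICAL_DD_THRESHOLD = 300_000     # cumulative Diff Delta, from the text
ENTERPRISE_DD_THRESHOLD = 500_000  # text's rough figure for Enterprise-focused products


def annual_to_monthly(annual_revenue: float) -> float:
    """Convert annual revenue to an average monthly figure."""
    return annual_revenue / 12


def revenue_looks_concerning(cumulative_dd: float, monthly_revenue: float,
                             sells_to_enterprise: bool = False) -> bool:
    """Past the relevant Diff Delta threshold, revenue under $10k/month suggests
    revisiting marketing or market choice.
    ASSUMPTION: the same $10k/month floor is reused at the 500k Enterprise threshold."""
    threshold = ENTERPRISE_DD_THRESHOLD if sells_to_enterprise else TYPICAL_DD_THRESHOLD
    return cumulative_dd >= threshold and monthly_revenue < MONTHLY_REVENUE_FLOOR


if __name__ == "__main__":
    # The text's $100k-$150k/year range at 300k DD brackets the $10k/month floor.
    print(round(annual_to_monthly(100_000)), round(annual_to_monthly(150_000)))  # 8333 12500
    # Hypothetical product: 320k DD and $6k/month in revenue.
    print(revenue_looks_concerning(320_000, 6_000))                            # True
    print(revenue_looks_concerning(320_000, 6_000, sells_to_enterprise=True))  # False
```

The first printed line shows why $10k per month is a sensible floor at 300k Diff Delta: it sits inside the $100k-$150k annual range quoted above.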

4. How does your company's growth compare to these?

Are you the Founder or VP of a company that is working to break into an existing industry? If so, we will offer three months of free Pro subscription to GitClear, contingent upon disclosing your revenue and monthly visitors through screenshots we can use to authenticate your claimed numbers. We extend this offer to companies of all sizes, even pre-revenue companies, because we believe that in the long term, the public good is served by having more free data available. The larger our sample size, the more robust we can expect these estimations to become. Stay tuned for the next edition of this report in 2024.