arena.ai

Keeps leaderboards based on users' comparisons of models. Seems to be the largest purveyor of real-world model evaluations. They run a podcast. The site has the feel of having started as a hobbyist project. Rankings are derived from human ratings. A possible weakness: AI companies could develop bots that submit ratings in favor of their own models' answers.

As of April 2026:

opus-4.6-thinking wins Software & IT Services with 1542 ($5/$25/M) vs 1524 for gemini-3.1-pro-preview ($2/$12/M) vs 1516 for gpt-5.2-latest ($1.75/$14/M). That equates to a difference of 1.2% between opus and gemini, and 1.7% for opus vs gpt-5.2.
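
The percentages above can be reproduced with a few lines of Python. This is only a sketch of the arithmetic; it assumes each lead is measured relative to the trailing model's score, and `pct_lead` is a name made up for this note.

```python
# Arena scores quoted in the notes above (Software & IT Services, April 2026).
scores = {
    "opus-4.6-thinking": 1542,
    "gemini-3.1-pro-preview": 1524,
    "gpt-5.2-latest": 1516,
}


def pct_lead(leader: float, other: float) -> float:
    """Leader's margin as a percentage of the other model's score (assumed convention)."""
    return (leader - other) / other * 100


top = scores["opus-4.6-thinking"]

# Lead of the top model over each competitor, rounded to one decimal place.
leads = {
    name: round(pct_lead(top, score), 1)
    for name, score in scores.items()
    if name != "opus-4.6-thinking"
}

for name, lead in leads.items():
    print(f"opus-4.6-thinking leads {name} by {lead}%")
```

Dividing by the leader's score instead gives the same figures after rounding, so the choice of denominator does not matter at this precision.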

opus-4.6-thinking also leads Writing/Language, with a numeric breakdown pretty similar to Software.

opus-4.6 leads Business, Management & Financial Ops with a similar 1%-ish difference over gpt-5.2.

"Code Arena" is a separate category from "Software & IT Services," with opus-4.6-thinking in the lead at 1546 vs 1456 for the next-best readily available competitor, gemini-3.1-pro-preview.

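Percent differences between scores are hard to interpret on their own. If the arena scores behave like classic Elo ratings (an assumption on my part: a base-10 logistic curve with a 400-point scale, ties ignored), a score gap can be turned into an expected head-to-head win rate. The function name and the `scale` parameter are hypothetical; this is a sketch, not arena.ai's published method.

```python
def expected_win_rate(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Expected probability that A is preferred over B under the standard Elo formula.

    Assumes an Elo-style scale (400 points, base 10) and ignores ties.
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))


# Code Arena: opus-4.6-thinking (1546) vs gemini-3.1-pro-preview (1456), a 90-point gap.
code_arena = expected_win_rate(1546, 1456)

# Software & IT Services: opus-4.6-thinking (1542) vs gemini-3.1-pro-preview (1524), an 18-point gap.
software = expected_win_rate(1542, 1524)

print(f"Code Arena expected win rate: {code_arena:.1%}")
print(f"Software & IT expected win rate: {software:.1%}")
```

Under that assumption the 18-point Software gap is only slightly better than a coin flip (roughly 53%), while the 90-point Code Arena gap works out to roughly 63%.
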
React comparison: opus-4.6-thinking

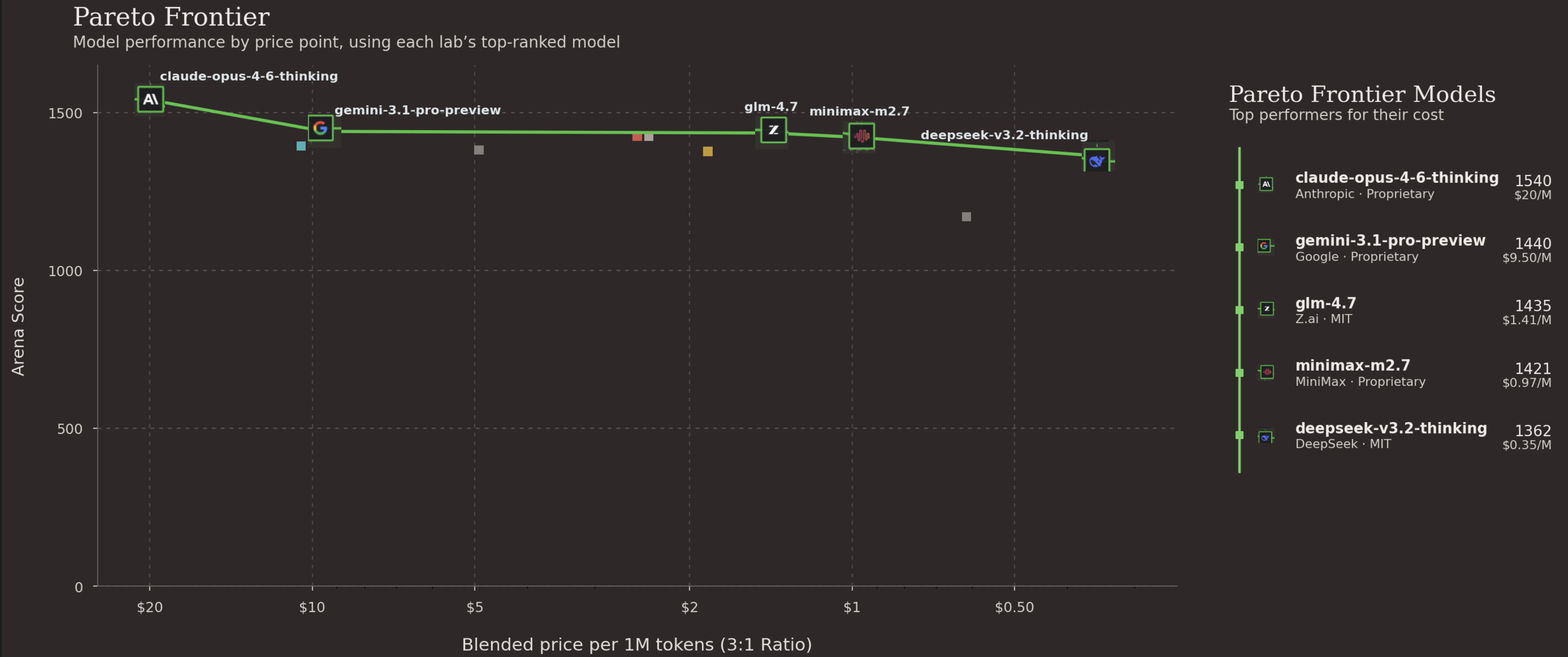

And here is the same comparison as arena.ai presents it, which I dislike because graphs whose axes don't start at zero obscure the absolute difference.

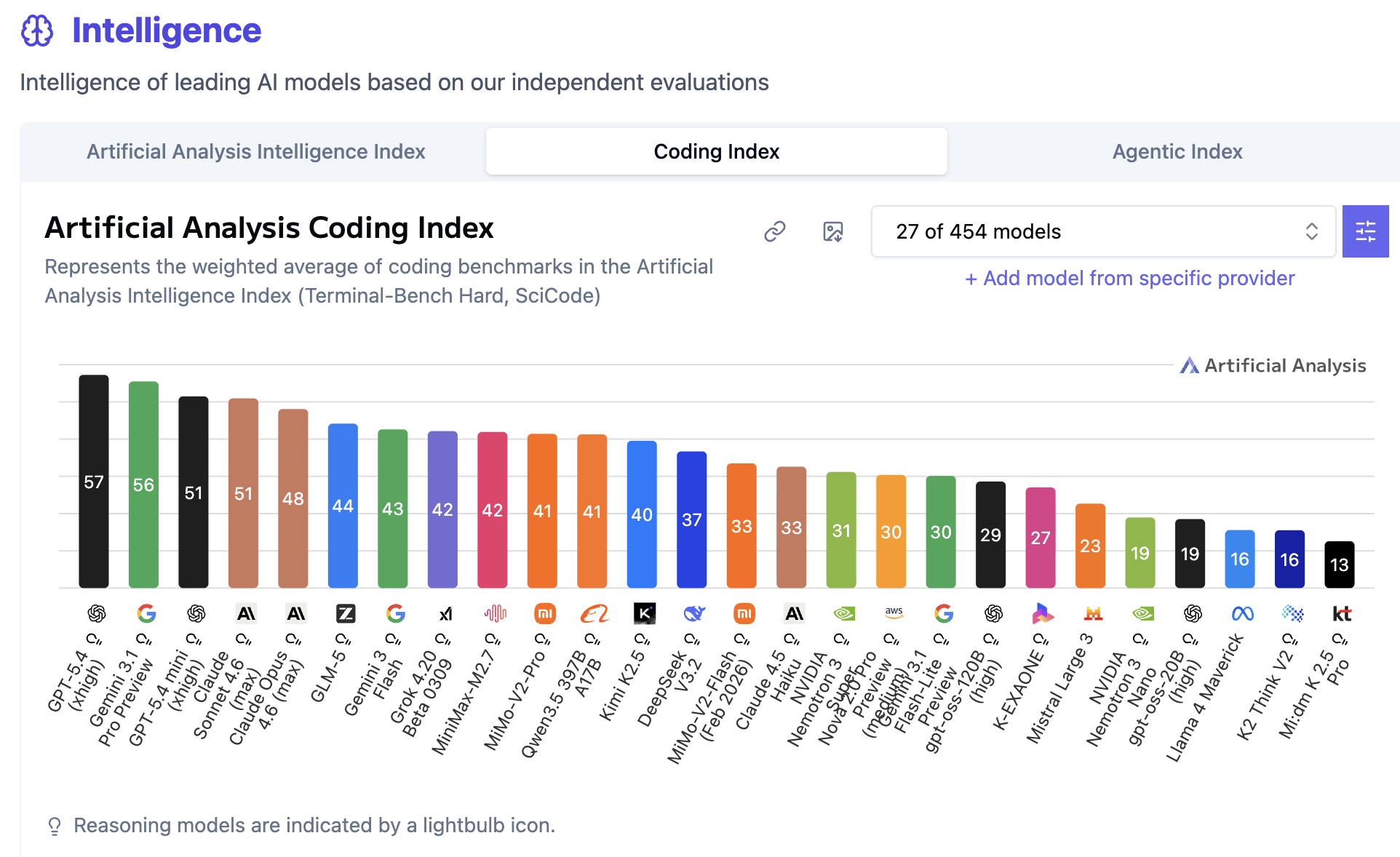

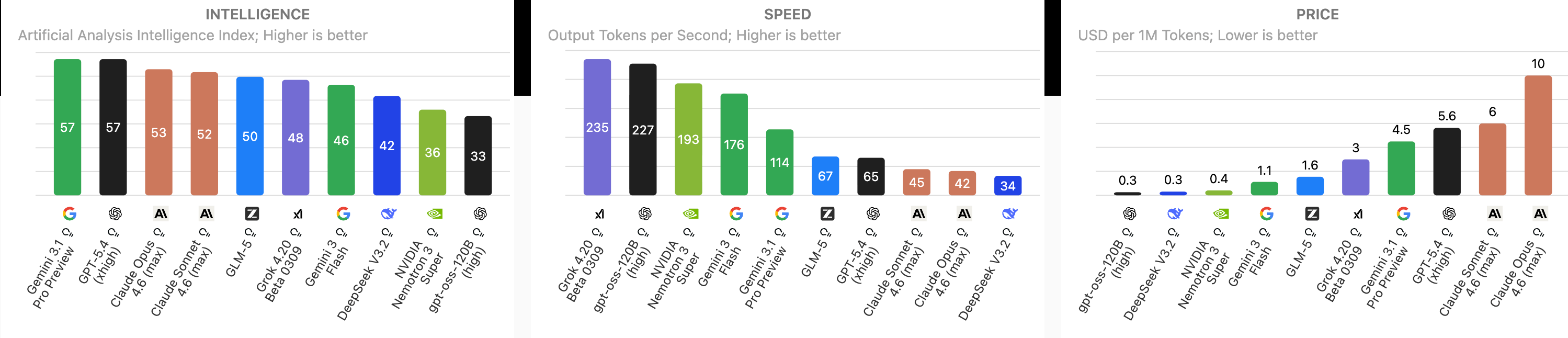
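
To put a number on the truncated-axis complaint above: the apparent ratio between two bars depends on where the axis starts. The sketch below compares a zero baseline with a hypothetical baseline of 1500; I have not checked what baseline arena.ai actually uses, so that value is purely illustrative, as is the `visual_ratio` helper.

```python
def visual_ratio(score_a: float, score_b: float, axis_min: float) -> float:
    """Ratio of the drawn bar heights when the axis starts at axis_min."""
    return (score_a - axis_min) / (score_b - axis_min)


opus, gpt = 1542, 1516  # Software & IT Services scores from the notes above

# Axis starting at zero: bar heights reflect the real ratio between scores.
honest = visual_ratio(opus, gpt, axis_min=0)

# Hypothetical axis starting at 1500: the same gap looks far larger.
truncated = visual_ratio(opus, gpt, axis_min=1500)

print(f"zero baseline: bar is {honest:.3f}x as tall")
print(f"1500 baseline: bar is {truncated:.3f}x as tall")
```

With a zero baseline the taller bar is under 2% taller; with the hypothetical 1500 baseline it is drawn more than 2.5 times as tall, even though the scores are unchanged.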

artificialanalysis.ai

Their evaluation methods:

The Intelligence Index: They calculate a composite score derived from a weighted average of modern benchmarks. They use 10 difficult evaluations, including Humanity’s Last Exam (PhD-level questions), SciCode (complex scientific Python programming), AA-Omniscience (their custom benchmark measuring exact factual recall vs. hallucinations), and GPQA Diamond. For statistical validity, they claim to run tests with multiple repeats (usually 10) to reduce variance, evaluating models on a strict "pass@1" metric (did it get the exact answer right on the first try).
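
A rough sketch of how a composite like this could be computed, based only on the description above: average pass@1 over repeated runs per benchmark, then take a weighted average across benchmarks. The benchmark names, weights, and results below are placeholders, not Artificial Analysis data, and their actual weighting and aggregation may differ.

```python
from statistics import mean


def pass_at_1(repeats: list[list[bool]]) -> float:
    """Mean first-attempt accuracy, averaged over repeated runs.

    Each inner list is one run; each bool is whether a question was
    answered correctly on the first try.
    """
    return mean(mean(1.0 if ok else 0.0 for ok in run) for run in repeats)


def composite_index(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-benchmark scores, scaled to 0-100."""
    total_weight = sum(weights[name] for name in scores)
    weighted_sum = sum(scores[name] * weights[name] for name in scores)
    return weighted_sum / total_weight * 100


# Placeholder results: two made-up benchmarks, two repeats of four questions each.
runs = {
    "benchmark_a": [[True, False, True, True], [True, True, False, True]],
    "benchmark_b": [[False, False, True, False], [False, True, True, False]],
}
weights = {"benchmark_a": 1.0, "benchmark_b": 1.0}  # equal weights are an assumption

per_benchmark = {name: pass_at_1(r) for name, r in runs.items()}
index = composite_index(per_benchmark, weights)
print(per_benchmark, index)
```

Averaging over repeats smooths out run-to-run noise in a single benchmark, but the final number is still sensitive to which benchmarks are included and how they are weighted.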

Their incentives and business model rely on B2B market intelligence and enterprise data sales. They offer a free, public-facing website that drives traffic and establishes authority. They make money by selling: premium reports, database/API access, consulting & workshops.

AI Model & API Providers Analysis | Artificial Analysis

From my experience, kinda fishy for Opus 4.6 to be substantially behind gpt-5.4, but their method says: