Q4 2025 and Q1 2026 Code LLM Ratings

Each rating is 1-5. For binary tasks, failure is a 1, partial success is a 3, and success is a 5. Earlier challenges were scored out of 10 in their write-ups below; the table shows those scores halved.

| Problem | OpenAI ChatGPT 5.3/5.4 | Anthropic Opus 4.5/4.6 | Google Gemini 3 Pro | Cursor Composer 1.5 |
| --- | --- | --- | --- | --- |
| Write a complicated test | 4 | | 1 | |
| Setup repo import polling fallback | | 4 | 4 | |
| Fix the emitProgress bug (part 2) | 3.5 | 4 | 2 | 1 |
| Write a test, implement a heuristic for single note request | 2.5 | 3.5 | 3.5 | 2 |
| Calculate a per-note heuristic | 4 | 3.5 | 3 | |
| Ensure we don't search for filler words | 2 | 2.5 | | |
| Allow looping tests to run individually | 1 | 1 | 5 | 5 |
| Implement and run a JS test for tag & update time | 1 | 1 | 1 | 1 |
| Average score | 2.57 | 2.79 | 2.79 | 2.25 |
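
The averages appear to skip challenges a model wasn't run against, rather than counting them as zero: the Cursor Composer column has four ratings and averages 2.25. A minimal sketch of that arithmetic follows. The helper names are made up for illustration, and the halving of 10-point scores is inferred from comparing the write-ups to the table.

```javascript
// Average only the challenges a model was actually rated on; blank cells are skipped, not zeroed.
function averageRating(ratings) {
  const rated = ratings.filter((rating) => typeof rating === "number");
  if (rated.length === 0) return null;
  const sum = rated.reduce((total, rating) => total + rating, 0);
  return Math.round((sum / rated.length) * 100) / 100;
}

// Scores from the older 10-point write-ups are halved to fit the 1-5 scale.
function toFivePointScale(scoreOutOfTen) {
  return scoreOutOfTen / 2;
}

// Cursor Composer column from the table, top to bottom (null = not evaluated).
const cursorComposer = [null, null, 1, 2, null, null, 5, 1];
console.log(averageRating(cursorComposer)); // 2.25
```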

These ratings are meant to offer data that helps others evaluate state-of-the-art LLMs on real-world coding challenges (rather than synthetic benchmarks, which the world has plenty of). The evaluations were run between Q4 2025 and Q1 2026.

The backdrop for these evaluations is a JavaScript note Search Agent (on GitHub), available free to any Amplenote user. It translates your criteria into a table of relevant notes, derived from examining your entire note history (11,000+ notes in my test case).

8. Write a complicated test

Query from February 2026

Add a base context block as a test to @vendor/gems/llm_metrics/test/unit/github_copilot_llm_usage_metric_test.rb that runs PopulateLlmMetrics with `ai_usage_provider_ems` with value `github_copilot` and `process_dates` of `Date.new(2026, 2, 2)` (after using Timecop.freeze to freeze the test date to February 5, so we will build LlmResourceStat records for the `daily` date_type_em).

On the first pass of processing PopulateLlmMetrics, we should ascribe the metrics from `vendor/gems/llm_metrics/test/fixtures/users-organization-1-day-2026-02-02.ldjson` to committer_id 0, combining the two developers' stats into a single LlmUsageMetric record and confirming that the sum of the columns in the fixture file matches the LlmUsageMetric that is persisted. The test should confirm that a daily LlmResourceStat exists for entity_resource, but not for organization_resource or repo_resource (since we can't derive what repo or organization this activity took place in)

Next, we should create a nested context block that makes the same call to PopulateLlmMetrics that was tested in the first "should" block. This will again ascribe all of the Copilot usage activity to a single committer_id with BLANK_COMMITTER_ID. Then, we should use CommitterFactory.create to create committers whose user names match the user names found in the fixture file ("wbharding" and "jordoh"). We should call PopulateLlmMetrics again with the same parameters as initially. This should detect that we can now associate the LlmUsageMetric records with specific committers. It should delete the initial LlmUsageMetric record, so now there is no LlmUsageMetric with committer_id of BLANK_COMMITTER_ID, nor any LlmResourceStat with that committer_id. There should instead be one LlmUsageMetric per developer for the date in question, and we should confirm that the `all_suggest_count` of LlmUsageMetric matches the values from the fixture. LlmResourceStat should have a `all_suggestion_count` that also matches for `entity_resource`, but `organization_resource` and `repo_resource` should still be 0 for that date.

Finally, we should set up a third nested context block that uses CommitFactory.create to create a commit for each of the developers (each in a different repo+organization) on Date.new(2026,2,2) at noon. The creation of this commit should trigger a stat rebuild that will reallocate the LlmUsageMetric to be associated with both the committer AND the repo_resource that the committer contributed to on the date in question. This final test should also confirm that each committer also has LlmResourceStat records with an organization_resource and entity_resource.

Gemini 3 Pro

Rating: 1 of 5. Fails to nest tests per prompt instruction. Makes up scopes that don't exist.

Files changed: Just the test file, as expected, but without any of the nesting requested, and with some made-up methods.

OpenAI ChatGPT 5.3

Rating: 4 of 5.

Files changed: Just the test file. Includes an unnecessary OrganizationFactory.create with superfluous attributes that would be hard for maintainers to interpret. Doesn't confirm the values of the reassigned metrics in the later nested test. Uses a weird method to stub enterprise discovery instead of the method utilized throughout other tests. On the plus side, it took only about 20% as long as the Opus agent. It probably cost 5-10% as much as the Opus query, and could almost certainly iterate to a workable test in less time and fewer tokens than Opus, but requires human intervention to get there.

Anthropic Opus 4.6

Rating: 3 of 5. Similar-looking quality to ChatGPT 5.3, but takes 10x longer and presumably 10x more tokens.

Files changed: Just the test file. Asserts against magic numbers, contrary to the explicit request in Agents.md. Like ChatGPT, creates unnecessary organizations & fails to check scope sums in the final nested test.

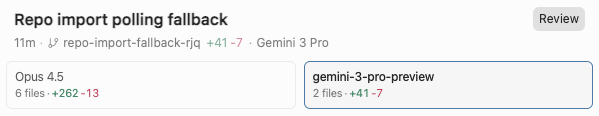

7. [#llm-on-llm-challenge] Setup repo import polling fallback

Query

Update select_repos to fall back to polling if it hasn't received an ActionCable message within 5 seconds. Polling interval should be 5 seconds, checking if any new repos have been processed and adding them to the existing component. Consider the case where multiple xhr requests might be made that overlap to retrieve repos and organizations that may be processed.

Consider also that the user may have applied a "Quick import" path filter that needs to be applied before showing incoming repos from the Javascript polling.

The polling should be implemented as a local function called via useEffect from a component that mediates repo importing, but preferably has 300 lines or less in it so far.
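
To make the moving parts of this query concrete, here is a minimal sketch of the three decisions a fallback has to make: when to start polling, how to ignore overlapping responses that arrive out of order, and how to merge polled repos through the quick-import filter. It is not either model's output. The function names, the `{ id, path }` repo shape, and the substring interpretation of the path filter are all assumptions for illustration.

```javascript
// Hypothetical constants; both 5-second values come from the query above.
const CABLE_SILENCE_LIMIT_MS = 5000;
const POLL_INTERVAL_MS = 5000; // Would be passed to setInterval inside useEffect

// True once ActionCable has been silent for at least the limit.
function shouldPoll(lastCableMessageAt, now) {
  return now - lastCableMessageAt >= CABLE_SILENCE_LIMIT_MS;
}

// Hands out increasing tickets so a slow, overlapping response can be ignored.
function createResponseGuard() {
  let issued = 0;
  let applied = 0;
  return {
    nextTicket() {
      issued += 1;
      return issued;
    },
    accept(ticket) {
      if (ticket <= applied) return false; // A newer response already landed
      applied = ticket;
      return true;
    },
  };
}

// Adds newly processed repos, skipping duplicates and anything outside the path filter.
function mergePolledRepos(existingRepos, polledRepos, pathFilter) {
  const knownIds = new Set(existingRepos.map((repo) => repo.id));
  const additions = polledRepos.filter((repo) => {
    if (knownIds.has(repo.id)) return false;
    knownIds.add(repo.id);
    return !pathFilter || repo.path.includes(pathFilter);
  });
  return existingRepos.concat(additions);
}
```

In a component, each poll would take a ticket before its request and call `accept` when the response arrives, only merging when `accept` returns true. The interval would be cleared in the `useEffect` cleanup.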

In heterogeneous enterprise environments, it's time-consuming to iterate on a well-tested ActionCable implementation. Thus, we seek a fallback, which means understanding a wide swath of JavaScript to make a useful suggestion. Didn't have the wherewithal to evaluate this for all the candidates, but glad I got to compare two opinions, given this level of divergence between solutions:

Better solution: 50 lines changed...or 270 lines changed?

By the (made-up now) principle of LCILCTM ("less code is less code to maintain"), advantage Gemini 3 Pro, prior to reviewing the code.

Upon actually reviewing the code...

Gemini 3 Pro

Rating: 4 of 5. It does succeed at polling organizations, with minimal code footprint. I expect that another prompt or two will get it to handle the case without organizations. As mentioned, initial preference is for less code vs. more.

Files changed

repo-quick-import.js Wants to add a new function to ensure that filtering mechanism will rapidly propagate to the RepoImporter shell. Looks important, considering RepoImporter otherwise doesn't know about the state of the quick filter component, which presumably controls which repos are shown by import? The check for 'object' and newValue feels more complex than a Senior Dev should desire.

repo-importer.js Pros: Terse; abides desire to only begin polling after cable failure; if it works, it is delightfully minimalistic. Cons: Probably won't work in initial form, since it requires organizations being known, which won't be the case initially; storing last action cable message as a ref instead of state is atypical; not a single line of explanation (probably matches surroundings?)

Opus 4.5

Rating: 4 of 5. I can't rate it below Gemini when it handled the most important case (importing orgs), but it feels like it might take a couple more well-crafted prompts to crunch this one enough to match Gemini's desirable properties, whereas that version only needs to add a bit more. This is a split decision.

Files changed

repo-quick-import.js Similar approach to Gemini to propagate state back to the parent component

routes.rb Adds a new method specifically for polling, beyond the existing repos_for_organization that has historically been used

remote_connections_controller.rb Good that it reused repos_for_organization rather than repeating it, as earlier generations might have done. Aside from wasting some vertical lines, this is an effective means to ensure that new organizations are added as they get discovered, working around the shortcoming of the Gemini proposal

use-repo-polling.js Nice to have it separated, but to dedicate 160 new lines to a component that has to be maintained is not desirable in a world where the Gemini version achieved this in less than 40 lines.

repo-importer.js There are surely existing methods to diffuse orgs/repos into the importer state, so minus points for implementing a new parallel mechanism to maintain

6. Fix the emitProgress bug (part 2)

Query (run concurrently)

Here is the developer's documentation on renderEmbed, onEmbedCall and callAmplenotePlugin: https://www.amplenote.com/help/developing_amplenote_plugins/actions#renderEmbed

Your mission is to update the implementation of renderEmbed or emitProgress, such that, as subsequent calls to emitProgress are made, the renderEmbed HTML will update. Currently, the page renders once when the user initially visits it, but none of the plugin's subsequent emitProgress calls are reflected in the HTML visible to the user within the embed (unless they navigate to another page and return to the embed).

When a teammate was asked about his ideas to resolve the problem, he guessed

> Generally the way I've handled that is passing messages (window.onAmplenoteMessage IIRC inside the embed) into the embed telling it to update/re-render. Otherwise, yes, calling openEmbed should re-render (though note the args from the call will be passed and stored, so if using args to store state need to pass in latest to every call).

Cursor Composer 1

Rating: ❌ 1/5. Binary failed to work, and added significant tech debt in the process of doing so.

Files changed

render-embed.js Exceptionally lengthy & difficult-to-maintain-looking implementation, but to its credit, it avoids polling.

search-agent.js Nice touch that it remembers to remove the old implementation

ChatGPT 5.2

Rating: ✅ 3.5/5. It does work, but it's inefficient, and its test is unnecessary.

Files changed

render-embed.js Looks superficially similar to Opus, though the refresh interval of 500ms seems like it won't be great for performance 😬 Also, the magic number isn't desirable.

plugin.js adds an onEmbedCall in incorrect alphabetic location. Somewhat difficult to grasp its purpose.

render-embed.test.js In principle, adding tests is a good idea. In this case, I'd be inclined to skip this one since it doesn't test any long-term truth

Gemini 3 Pro

Rating: ❌ 2/5. Binary fail, but it still gets a point for not being obtuse, and potentially an iteration away from a more efficient solution than the poller-based ones.

Files changed

render-embed.js Concise, but ineffective at resolving the bug.

search-agent.js Probably closer to working than current, but does not succeed at compelling the embed to re-render.

Opus 4.5

Rating: ✅ 4/5. Not the most concise, but effective. It did consume at least 10x more tokens than the others while responding, as it relentlessly (and eventually, effectively) pursued a means to access the doc link via curls.

Files changed

render-embed.js Adds a lengthy polling mechanism with a 500ms interval, which is tested/proven to resolve the bug. Critically, it imparts a mechanism to prevent running the polling indefinitely in the background. Negative points for beginning its polling with a naughty magic number.

plugin.js Modest addition, respecting alphabetic location within file

search-agent.js Tidies up now-superfluous code
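
For reference, the two properties praised and criticized above (a stop condition so polling doesn't run forever, and a named constant instead of a bare 500) can be sketched as follows. This is not the Opus implementation. `fetchProgress` and `render` are injected stand-ins rather than Amplenote APIs, they are synchronous here only to keep the sketch short, and the idle cutoff is an assumed value.

```javascript
// Hypothetical named constants, replacing the bare 500 that drew the "magic number" complaint.
const PROGRESS_POLL_INTERVAL_MS = 500;
const MAX_IDLE_POLLS = 20; // Assumed cutoff: give up after ~10 seconds with no new progress

// Builds a poller whose tick() is meant to be called every PROGRESS_POLL_INTERVAL_MS.
function createProgressPoller(fetchProgress, render) {
  let lastProgress = null;
  let idlePolls = 0;
  let stopped = false;

  return {
    // Returns true while polling should continue, false once it should be torn down.
    tick() {
      if (stopped) return false;
      const progress = fetchProgress();
      if (progress === lastProgress) {
        idlePolls += 1;
      } else {
        lastProgress = progress;
        idlePolls = 0;
        render(progress); // Only re-render when something actually changed
      }
      if (idlePolls >= MAX_IDLE_POLLS) stopped = true;
      return !stopped;
    },
    stop() {
      stopped = true;
    },
  };
}

// Possible wiring inside the embed (readProgress and renderHtml are placeholders):
// const poller = createProgressPoller(readProgress, renderHtml);
// const timer = setInterval(() => { if (!poller.tick()) clearInterval(timer); }, PROGRESS_POLL_INTERVAL_MS);
```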

5. Write a test (and implement) a heuristic for when a user requests a single note

Query used

Add a test for phase1, to prove that if the user query requests "the note that..." that LLM criteria will return an expected result count of "1", and that will be propagated through SearchAgent if the user left the "result count" field blank

After running this test 6x, I began to get the feeling that the versions were learning from one another, in particular since the second ChatGPT and Gemini tries were significantly better than their first tries. In the future, I'll begin running these experiments concurrently instead of the current & previous "propose & revert" methodology.

ChatGPT 5.2

Rating: 🟡 5 of 10. The result files shown here were from my second query. My first query, which I didn't capture, had it choosing to implement a standalone function that looked for the exact string given (🤦‍♂️) rather than modifying the LLM query as it did on its second try. This suggests that Cursor might offer extra context to LLMs upon repeated queries, even though it says it is reverting. Anyway, the second version gets a somewhat lower rating for inducing such a broad code review (5 files changed), for taking longer than 5 mins to generate, and for having the LLM return what should just remain a behind-the-scenes constant. Tests were inadequate.

Files changed

query-breakdown.js Sensible prompting addition, except that it should default to null rather than the number that happens to be the current const default

user-criteria.js Given that Opus' version passed tests w/o changing this file, this seems superfluous. Further interrogation yields weird back-and-forth.

plugin.js Good catch to have the user messaging reflect AI judging note count, though it's generally going to be 10 notes the AI chooses, so it's not perfect messaging

query-breakdown.test.js Delightfully concise, but we need more than a single test in the main file that deserves tests

search-agent.test.js This test file is already overcrowded, not a great place to add a test.

Gemini 3 Pro

Rating: 🟡 7/10. Most concise LLM prompt wording of the candidates. Good catch of the bug in plugin.js. Loses 3 points for such a lame first-pass as the test file, but it amended it nicely with a short prompt, as described below.

Files changed

query-breakdown.js Ideal, concise LLM language change

plugin.js Good catch of an existing wrinkle that would foil effective ability for LLM to ever be obeyed

phase1-result-count.test.js All the mocking of LLM responses seems to block the test from actually gauging whether the LLM language works. If human emotions were being ascribed, it seems like it is cheating: ensuring the test will pass at the expense of not testing anything real. After asking it to knock that shit off, it made a much better test.

Opus 4.5

Rating: 🟡 7/10. Impressive test & nicely concise. It missed the bug that plugin.js would always pass the default search value, though, so LLM wouldn't be used. Took around 3-4 mins?

Files changed

query-breakdown.js On the wordy side, and insufficient emphasis on null, though good that it is mentioned

query-breakdown.test.js Good implementation of 3 reasonably-sized tests with little obvious redundancy. It was the only candidate that checked that the ultimate result was a single note. However, it failed to test the non-single cases that Gemini inferred were desirable.

Cursor Composer

Rating: 🟡 4/10. Missed the bug in plugin.js, overly wordy prompt, test was limited

Files changed

query-breakdown.js Overly wordy, insufficient emphasis on the likely null case

search-agent.test.js Is already pretty polluted with randomness, would be preferable to locate it in a more specific file.

query-breakdown.test.js Good starter tests, but should also include tests for non-one numbers & default 10
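
The behavior all four candidates were reaching for boils down to a precedence rule, and the plugin.js bug was that the default was always passed in as if the user had typed it. A minimal sketch of that rule, with hypothetical function names (the default of 10 is the one mentioned in the reviews above):

```javascript
const DEFAULT_RESULT_COUNT = 10;

function isPositiveInteger(value) {
  return Number.isInteger(value) && value > 0;
}

// Precedence: an explicit user entry wins, then the LLM's inferred count, then the default.
// A blank "result count" field must arrive here as null, not as the default value.
function resolveResultCount(userEnteredCount, llmResultCount) {
  if (isPositiveInteger(userEnteredCount)) return userEnteredCount;
  if (isPositiveInteger(llmResultCount)) return llmResultCount;
  return DEFAULT_RESULT_COUNT;
}

console.log(resolveResultCount(null, 1)); // 1: "the note that..." with the field left blank
console.log(resolveResultCount(5, 1)); // 5: the user's explicit entry wins
console.log(resolveResultCount(null, null)); // 10: nothing specified anywhere
```

Tests for the non-one and default cases, which several reviews above asked for, fall out of the same three branches.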

4. Calculate a per-note heuristic

Query used

Update the note preliminary ranking so that the larger the note's bodyContent is, the more dilute the match, the lower its score. Each candidate note should be assigned a heuristic that relates the density of matches within the note as follows:

1. Each match of a primary keyword in the title = 5 points

2. Each match of a primary keyword in the body = 1 point

3. Each match of a secondary keyword in the title = 2 points

4. Each match of a secondary keyword in body = 0.5 points

5. Each primary keyword that includes a part of tag hierarchy = 1 point (e.g., a keyword is "business suitcase" and the tag is "business/rigors" should give one point because the first piece of the tag, "business," exists within the keyword)

6. Each secondary keyword that includes a part of tag hierarchy = 0.5 points

The sum of all points should be divided by (noteContentLength / 500). This way, the highest preliminary ranked notes are those with the highest density of overlap of title & body content. Additional implementation considerations:

* Each number should be defined as a constant, not a magic number

* Regardless of which keyword initially matched the note, the note should always evaluate to the same value since deriving the note's preliminaryScore considers every primary & secondary keyword, plus the note title, body and tags

* The heuristic should be stored as a column of searchCandidateNote. It needs a descriptive name, such as "keywordDensityEstimate" or "matchDensity"

* When looking for body matches, we should only use the searchCandidateNote's bodyContent, which is a subset of the full content for the note. However, the calculation of noteContentLength / 500 should use the full length of the note body that was stored
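
As a reference point for judging the candidates, the rules above can be sketched in about 50 lines. This is my reading of the query, not any model's output. The note shape (`title`, `bodyContent`, `tags`, `fullContentLength`) is assumed, tag points are awarded once per keyword, and treating an empty note as length 1 is my own guard against dividing by zero.

```javascript
// Point values from the query above, as named constants.
const PRIMARY_TITLE_POINTS = 5;
const PRIMARY_BODY_POINTS = 1;
const SECONDARY_TITLE_POINTS = 2;
const SECONDARY_BODY_POINTS = 0.5;
const PRIMARY_TAG_POINTS = 1;
const SECONDARY_TAG_POINTS = 0.5;
const DENSITY_LENGTH_DIVISOR = 500;

// Counts non-overlapping, case-insensitive occurrences of needle in haystack.
function countOccurrences(haystack, needle) {
  const text = (haystack || "").toLowerCase();
  const term = (needle || "").toLowerCase();
  if (!term) return 0;
  let count = 0;
  let index = text.indexOf(term);
  while (index !== -1) {
    count += 1;
    index = text.indexOf(term, index + term.length);
  }
  return count;
}

// True when the keyword contains any "/"-separated piece of any tag.
function keywordTouchesTag(keyword, tags) {
  const term = keyword.toLowerCase();
  return tags.some((tag) => tag.toLowerCase().split("/").some((piece) => piece && term.includes(piece)));
}

function keywordPoints(keywords, note, titlePoints, bodyPoints, tagPoints) {
  return keywords.reduce((total, keyword) => total
    + countOccurrences(note.title, keyword) * titlePoints
    + countOccurrences(note.bodyContent, keyword) * bodyPoints
    + (keywordTouchesTag(keyword, note.tags) ? tagPoints : 0), 0);
}

// Considers every keyword, so the result is the same regardless of which keyword found the note.
function keywordDensityEstimate(note, primaryKeywords, secondaryKeywords) {
  const points = keywordPoints(primaryKeywords, note, PRIMARY_TITLE_POINTS, PRIMARY_BODY_POINTS, PRIMARY_TAG_POINTS)
    + keywordPoints(secondaryKeywords, note, SECONDARY_TITLE_POINTS, SECONDARY_BODY_POINTS, SECONDARY_TAG_POINTS);
  // Assumption: treat an empty note as length 1 to avoid dividing by zero.
  const lengthFactor = Math.max(note.fullContentLength, 1) / DENSITY_LENGTH_DIVISOR;
  return points / lengthFactor;
}

const exampleNote = {
  title: "Business plan",
  bodyContent: "business business travel",
  tags: ["business/rigors"],
  fullContentLength: 1000,
};
console.log(keywordDensityEstimate(exampleNote, ["business"], ["travel"])); // 4.25
```

In the example, the primary keyword earns 5 (title) + 2 (body) + 1 (tag), the secondary keyword earns 0.5 (body), and the 8.5 total is divided by 1000 / 500 = 2.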

Gemini 3 Pro

Took its sweet time to consider all the elements of the query (probably a good thing, given the level of detail provided?). The first five minutes after submitting query were spent with Cursor outputting ever-more text into the "Exploring" status area. After 10 minutes, Cursor said it had stopped hearing from Gemini. 😞 Re-submitted query. Follow-up attempt yielded calculated scores that look pretty reasonable.

Rating: ✅ 6 of 10. Seems to follow all the rules, while adding a modest amount of code within a well-labeled function. Still passes tests. Does not apply calculated heuristic method after phase2, when it could be relevant to decide which notes are most deserving to be LLM-analyzed in phase4.

File changes:

Meat of implementation put into one nicely modular method of SearchCandidateNote. Not alphabetized per Cursor rules. Does not use a constant for the "500" magic number. But cursory review looks like it would work

Updates executeSearchStrategy to apply heuristic in ordered results returned by phase2

Added new constants, as requested

ChatGPT 5.2

Like Gemini, took 5+ minutes to evaluate, much of which was spent before code changes started appearing. Unlike Gemini, it did not crap out and require re-submit. Ultimately changes 140 lines (70% add, 30% delete) across only two files, most of it happening in phase3, replacing the existing rankPrelim logic so we don't have it redundant like Gemini. That said, it would be more ideal for the heuristic to be calculated within SearchCandidateNote, and it would be nice if it were used starting in phase2, to sort the search/filter notes returned.

Rating: ✅ 8 of 10. Implements rank in the existing step responsible for sorting results, and thinks to remove all the legacy implementation of phase3.

File changes:

Replaces previous rankPreliminary method with functions that calculate rank based on the factors specified. Again, good to retain logic flowing through the blessed method.

Replaces existing ranking-related constants in phase3. This seems like probably a good idea, such that we don't have dual implementations to estimate the relevancy of a note result.

Claude Opus 4.5

Pretty similar to Gemini 3 Pro, albeit finished constructing its suggestions in less time. 150 lines of suggested change (95% added). I'd say that the Opus implementation of keyword density calculation is more readable by virtue of using more helpers to separate out the "keyword counting" logic. Good that it is applying the sort during phase2, but it would be better if it also recognized that the work happening in phase2 makes phase3 redundant. Still, I deem the ChatGPT implementation the better starting point, since it was able to remove all the now-redundant logic from phase3.

Rating: ✅ 7 of 10.

File changes:

Implements calculateKeywordDensityEstimate in SearchCandidateNote, as I'd have been inclined to

Unclear if the 70 lines dedicated to helper functions are really necessary, but in principle it's a good idea to keep the density-calculation method as concise as possible

3. Ensure that we don't search for filler words

Query used

When parsing the response returned from userSearchCriteria, users are likely to often preface their desired query by mentioning things like "Return notes with" or "Notes that mention" or "Any notes about" before they begin to describe the actual criteria that should be used to go locate a list of notes.

Please update our first LLM query such that it recognizes the tendency of users to begin by mentioning they want notes returned (in phrases like the ones given) but that nothing in this first prefix should be used as a "primary keyword" or "secondary keyword". Then write a new test in a test/search-agent/* file that confirms that user queries like the ones above (followed by the actual description of what the user wishes to search for) do not result in the LLM include "note" or any of the other prefix words in the returned array of primaryKeywords and secondaryKeywords from our first LLM call

ChatGPT 5.2 (Max Mode) Proposed Changes

Summary: Adds two new methods to query-breakdown and a 50 line test file. Rating: 4/10. Breaks from suggestions in cursor-rules, and creates an ad hoc list of terms that feel like they should prob be a constant? Plus, the most interesting test feels like it would focus on submitting ~10 cases of queries w/ long prefixes, to confirm that the LLM doesn't take the bait. Whether we actually need to post-process the LLM results is questionable (prob not, given potential for false positives), but if we do, that seems much less likely to require testing than "how well the LLM abides its mandate to avoid those words, even when the words weren't explicitly in our list of 'filler words'"?

File changes

Prefixes new instructions in query-breakdown file. Seems like these reccos would make more sense after providing the body of the instructions?

Builds new methods that are called post-LLM to ensure primaryKeywords and secondaryKeywords are not populated with a black list of words (that break the .cursorrules directive to prefer using the full 100 width of characters over per-line args)

Creates test that repeats a bunch of the specific terms it included in the non-const list from query-breakdown file. Also tests that the prompt contains the words that it specified for the prompt, reminiscent of many Matthew tests that would assert on long strings from the time the original label was implemented, but are in no way central to functionality (and unlikely to not exist)

Opus 4.5 (Max Mode) Proposed Changes

Method: Stashed the 5.2 proposal, gave it identical query to 5.2.

Summary: Updates query sent to LLM in query-breakdown and creates a 140 line test file. Rating: 5/10. I prefer not implementing the post-hoc filtering since it seems like it should be possible to prompt the LLM to just avoid returning bad words, and a fool's errand to try to enumerate every word that might be filler. The approach to testing is also much closer to Bill's pre-implementation ideal, in that it is actually submitting a bunch of example prompts to see what LLMs return. But the lack of DRYness leaves much to be desired. Upon a follow-up prompt to tell it to DRY the test file, it successfully reduced the file size by half (all 5 tests still exist+pass). It does still call each of the different providers, which is a nice touch.

File changes

Prefixes new instructions in query-breakdown file. Comment from 5.2 still applies. Also adds phrases to other lines to reaffirm that the prefix words should be omitted. Does not add the standalone methods from 5.2.

Creates 141-line test that creates an ad hoc list of filler words, similar to 5.2 in that they're semi-arbitrary and each on their own line, contrary to .cursorrules. Actually tests the response from LLM rather than the post-hoc filter, which hews closer to my intuition than the 5.2 approach.

Nice that it thinks to test the three major providers in most of the tests. Not nice that, rather than using the usual method to retrieve which providers we have a test key for, it just hard-codes the provider names

Serious DRY fail: Among the 5 tests, they each use a different variant of user query (smart!) but then they each have ~12 lines of boilerplate that could seemingly be distilled into a single helper/setup that would reduce the file to just an array of "user queries" and "expected words"

Test status: all 5 pass

2. Allow looping tests to be run individually

I started with a test that looped over a const with three params per element I want to confirm are processed as expected by LLMs. Since the test was implemented as a loop, the individual elements of the const couldn't be invoked individually, which became more annoying as the size of the const array grew.

This one made for a great differentiator, since it requires the LLM to be able to construe what the user means by "invoked individually via my IDE," which implies either conceptual understanding, or at least getting pretty lucky with interpreting "individually."

The results below are from assorted models invoked via Cursor. All models took less than 1 minute to finish, so speed is not noted. It felt like ChatGPT 5.1-Codex-High might've taken the longest overall (maybe a touch over a min).

Query used

Update query-breakdown-test such that each of the PREFIX_FILTERING_TEST_CASES can be invoked individually via my IDE, while keeping the overall test structure DRY

ChatGPT 5.2

Rating: ❌ Fails to achieve its goal - the tests are still in a for loop that can't be invoked individually.

File change

Essence of proposed change, plus some more helper method code moved to bottom

Opus 4.5

Rating: ❌ Fails to achieve its goal - the tests are still in a for loop that can't be invoked individually.

File change

Changes array of arrays to an array of objects - not a bad idea in general, but not what was prompted.

Gemini 3 Pro

Rating: ✅ Figures out to split the array into separate cases, though not quite ideally. Confirmed tests run & pass.

File change

Actually split the array into separate test cases. Though didn't maintain the array structure, and per-test line length leaves something to be desired. Nice that it only added 10 lines cumulatively tho

Grok Code

Rating: 🟡 Best code structure, but allowed an exception that broke all tests (presumably would've been found if I let it try to run test, but that's not what this trial is evaluating)

File change

Best job of any so far in retaining the const array (for easy modification) while breaking the tests up in a sensible way. Would be winner, but for compile error 🤦‍♂️

ChatGPT 5.1-Codex-High

Rating: ❌ Pretty bad - made file 30 lines longer, didn't separate tests as is main objective. Didn't break tests at least.

File change

Cursor Composer 1

Rating: ✅ Figures out to split the array, does a nicer job than Gemini of keeping each case readable. Confirmed passing.

File change

Took the contents of the const and made a test case for each. On one hand, surprising that an anon model could beat so many better-known competitors. On the other hand, pretty sad that it left the PREFIX_FILTERING_TEST_CASES in place and unused after its fix 🤦‍♂️
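
For comparison, here is a minimal sketch of one structure that keeps the const array and still gives the IDE one named test per case. It is not any model's output. The case contents and `fakeKeywordBreakdown` are stand-ins (the real array and the LLM-backed call aren't shown in this post), and the `defineTest` shim exists only so the sketch also runs under plain Node.

```javascript
// Stand-in data: the real PREFIX_FILTERING_TEST_CASES contents are not shown in this post.
const PREFIX_FILTERING_TEST_CASES = [
  { name: "return notes with", query: "Return notes with quarterly budget", forbidden: ["return", "notes"] },
  { name: "any notes about", query: "Any notes about hiring plans", forbidden: ["any", "notes", "about"] },
];

// Hypothetical stand-in for the LLM-backed breakdown, so the sketch runs offline.
function fakeKeywordBreakdown(query) {
  return query.toLowerCase().replace(/^(return notes with|any notes about)\s+/, "").split(/\s+/);
}

function caseNamed(name) {
  const testCase = PREFIX_FILTERING_TEST_CASES.find((candidate) => candidate.name === name);
  if (!testCase) throw new Error(`Unknown test case: ${ name }`);
  return testCase;
}

// Shared assertion, so each test body stays a one-liner and the file stays DRY.
function expectNoPrefixWords(testCase, breakdown = fakeKeywordBreakdown) {
  const leaked = breakdown(testCase.query).filter((keyword) => testCase.forbidden.includes(keyword));
  if (leaked.length > 0) throw new Error(`Prefix words leaked into keywords: ${ leaked.join(", ") }`);
}

// Under Jest or Mocha, `it` registers separately runnable tests; under plain Node, run immediately.
const defineTest = typeof it === "function" ? it : (title, body) => body();

defineTest("ignores 'Return notes with' prefix", () => expectNoPrefixWords(caseNamed("return notes with")));
defineTest("ignores 'Any notes about' prefix", () => expectNoPrefixWords(caseNamed("any notes about")));
```

The cost is one extra line per case. The benefit is that each case has a literal test declaration the IDE can target, while the data stays in a single array.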

1. Implement and run a new JS test

Query

Implement the "tag and updated stamp" test in @test/search-agent/search-agent.test.js , to only return notes that include the tag "business" and were updated within the past 2 weeks. Derive which notes match these criteria, then write a test to confirm the returned notes match the derived notes. Ensure that notes are returned when "business" is the root tag of a hierarchical tag

Claude Opus 4.5

Rating: ❌ Test appears to be potentially functional, and not difficult to understand, but all of its hard-coded values will make it prone to brittleness

File change

Added a 30 line test that did not derive the correct results, as requested, but rather hard-coded the expected note names. Also ugly to compare expect(result.notes.length) against the hard-coded 3 instead of the length of expectedNames.

ChatGPT 5.2

Rating: ❌ Adds a 50+ line test that promises to be challenging to maintain given the heft of its logic requiring interpretation.

File change

Does derive the expected notes, but also mocks llm, adding about 20 lines of complexity to understand/maintain. Also does a fair amount of lines at the end of dubious utility.

Gemini 3 Pro

Rating: ❌ Adds a 30 line test that explicitly defines the expected notes rather than deriving them per query instructions

File change

A 30 line test very similar to Opus-4.5, down to the hard-coded result but the otherwise nicely simple logic.

Cursor Composer 1

Rating: ❌

File change

About as good as any of them. Still hard-codes expected names, but seems otherwise reasonably concise. Does more than the rest to confirm that returned notes do in fact include the tag. Though that prob isn't necessary if the results just match the items in the array that have the expected tag.
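
Since every candidate stumbled on "derive which notes match," here is a minimal sketch of what deriving could look like. The `{ name, tags, updated }` note shape, the fixture notes, and the frozen `now` are assumptions for illustration; the real test fixtures aren't shown in this post.

```javascript
const TWO_WEEKS_MS = 14 * 24 * 60 * 60 * 1000;

// True for the tag itself ("business") or any child in its hierarchy ("business/rigors").
function hasRootTag(note, rootTag) {
  return note.tags.some((tag) => tag === rootTag || tag.startsWith(`${ rootTag }/`));
}

// Derives the expected notes from the fixture list instead of hard-coding their names.
function deriveExpectedNotes(notes, rootTag, now) {
  const cutoff = now.getTime() - TWO_WEEKS_MS;
  return notes.filter((note) => hasRootTag(note, rootTag) && new Date(note.updated).getTime() >= cutoff);
}

// Hypothetical fixtures, with a fixed "now" so the derivation is deterministic.
const now = new Date("2026-02-05T12:00:00Z");
const fixtureNotes = [
  { name: "A", tags: ["business"], updated: "2026-02-01T00:00:00Z" },
  { name: "B", tags: ["business/rigors"], updated: "2026-01-30T00:00:00Z" },
  { name: "C", tags: ["business"], updated: "2026-01-01T00:00:00Z" }, // Too old
  { name: "D", tags: ["businesslike"], updated: "2026-02-04T00:00:00Z" }, // Different tag
];
const expectedNotes = deriveExpectedNotes(fixtureNotes, "business", now);
console.log(expectedNotes.map((note) => note.name)); // [ 'A', 'B' ]
```

The test would then compare the search result against `expectedNotes` (including `expectedNotes.length`), so adding a fixture note never requires editing a hard-coded list or count.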

Are these valuable?

It takes some time to evaluate all these questions, so if you find this real-world data useful, please drop a line? Sorry we don't have normal Google login atm, we're a dev-focused company so it's all git logins. 🤓